Perform area estimation analysis with SEPAL-CEO#

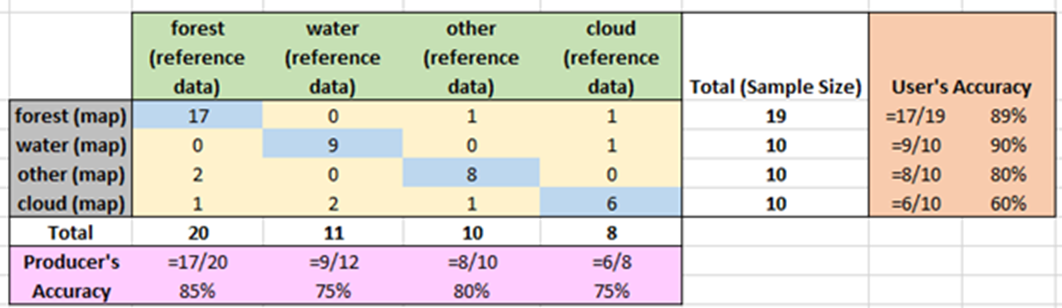

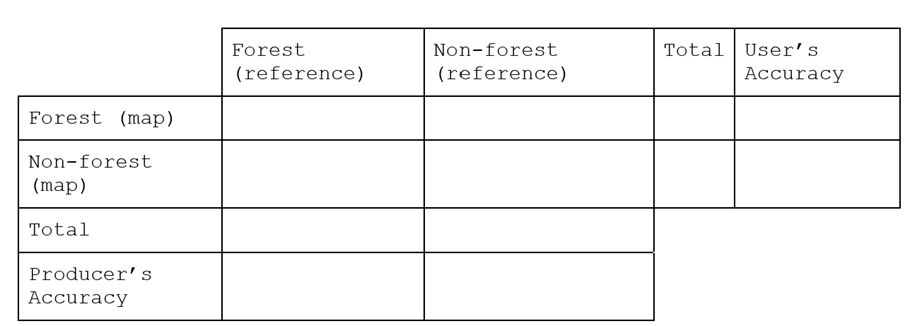

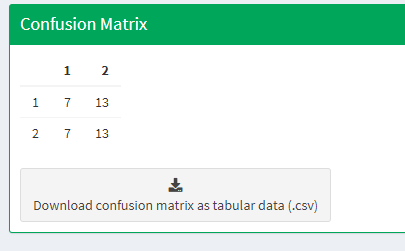

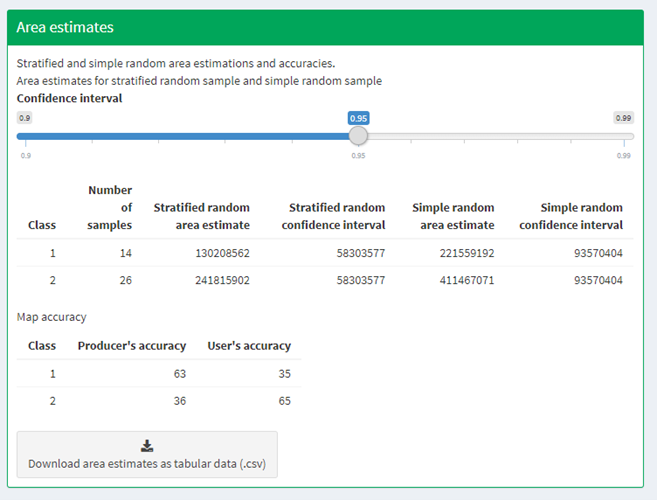

Complete area estimation for land use/land cover and two-date change detection classifications

Note

The SEPAL team would like to thank SIG-GIS for this documentation material (SIG-GIS refers to the Spatial Informatics Group - Geographic Information System).

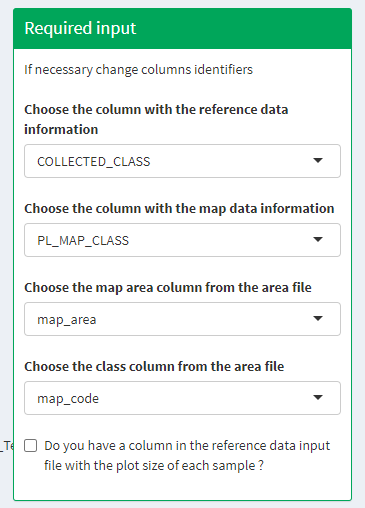

Important

To follow this tutorial, you need to register to:

SEPAL

Google Earth Engine (GEE)

Collect Earth Online (CEO)

Introduction#

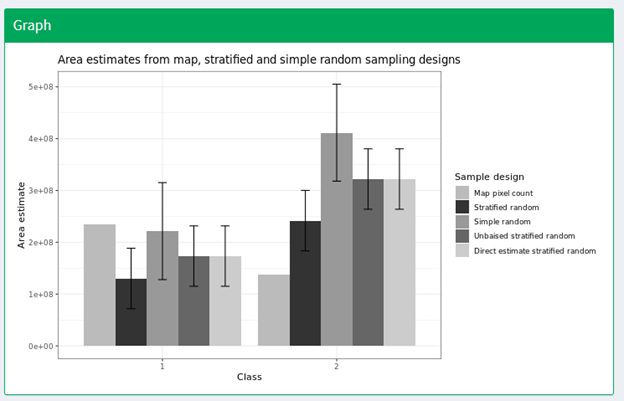

In this article, you will learn how to perform area estimation for land use/land cover and two-date change detection classifications. We will use sample-based approaches to area estimation. This approach is preferred over pixel-counting methods because all maps have errors (e.g. maps derived from land cover/land use classifications may have errors due to pixel mixing or noise in the input data). Using pixel-counting methods will produce biased estimates of area; you cannot tell whether these are overestimates or underestimates. Sample-based approaches create unbiased estimates of area and the error associated with your map.

The goal of this article is to teach you how to perform these tasks so that you can conduct your own area estimation for land use/land cover or change detection classifications.

In this article, you will find four modules covering methods and one module covering the documentation needed for replicating these methods. The modules are as follows:

In Module 1, you will learn how to generate mosaics based on satellite imagery in SEPAL. You will learn how to build these mosaics by selecting different data sources and images based on dates and cloud cover.

In Module 2, you will learn how to perform a land use/land cover image classification using random forest methods. You will learn how to define your land uses and land covers, collect training data, and run your model.

In Module 3, you will learn how to perform image change detection. Building on skills from Module 1 and Module 2, you will define what change looks like, collect training data, and run your model. You will also learn about different tools used to perform time-series analysis.

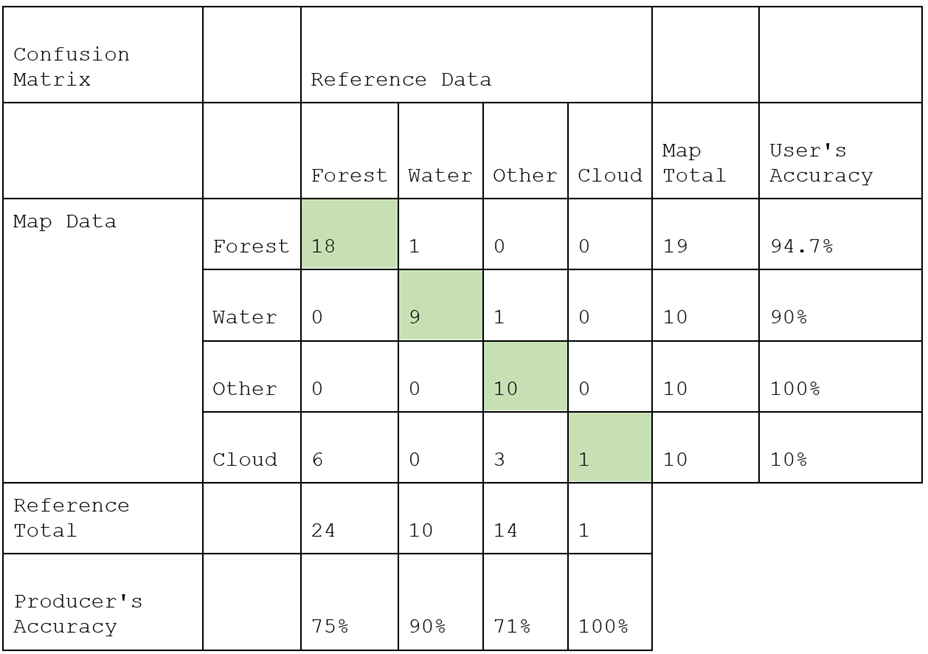

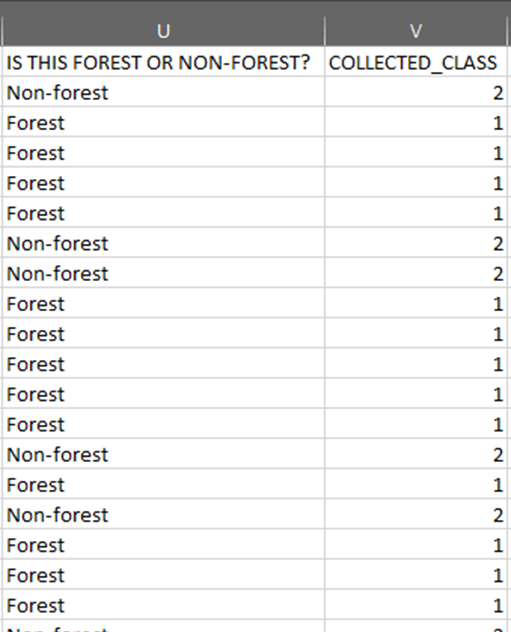

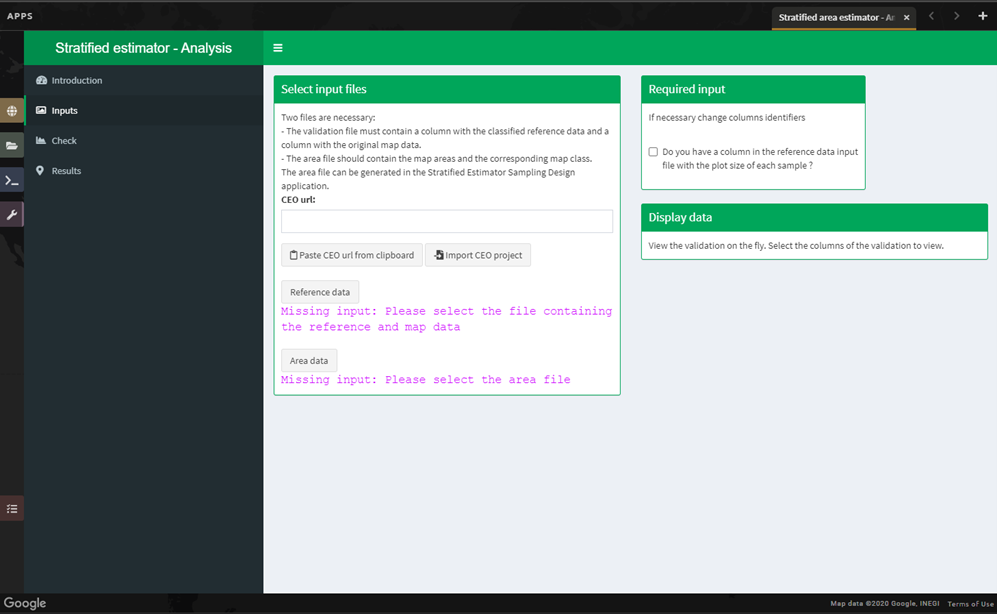

In Module 4, you will learn how to calculate a sample-based estimate of area and error. You will learn how to use stratified random sampling and verification image analysis to calculate area and error estimates based on the classification you create in Module 2. You will also learn about some key documentation steps in preparation for Module 5.

In Module 5, you will learn about documenting and archiving your area estimation project. The information in this step is required for your project to be replicated by yourself or your colleagues in the future (either for additional areas or points in time).

These exercises include step-by-step directions and are built to facilitate learning through reading and practice. This article will be accompanied by short videos, which will visually illustrate the steps described in the text.

![digraph process {

mosaic [label="Mosaic image creation", href="#mosaic-generation-landsat-sentinel-2", shape=box];

classif [label="Image classification", href="#image-classification", shape=box];

change [label="Two-date change detection", href="#image-change-detection", shape=box];

sample [label="Sample-based area and error", href="#sample-based-estimation-of-area-and-accuracy", shape=box];

doc [label="Documentation", href="#documentation-and-archiving", shape=box];

mosaic -> classif;

mosaic -> change;

classif -> sample;

change -> sample;

sample -> doc;

}](../_images/graphviz-822e05f396072876b47588fdbbf3a23d3e0728b8.png)

Required tools#

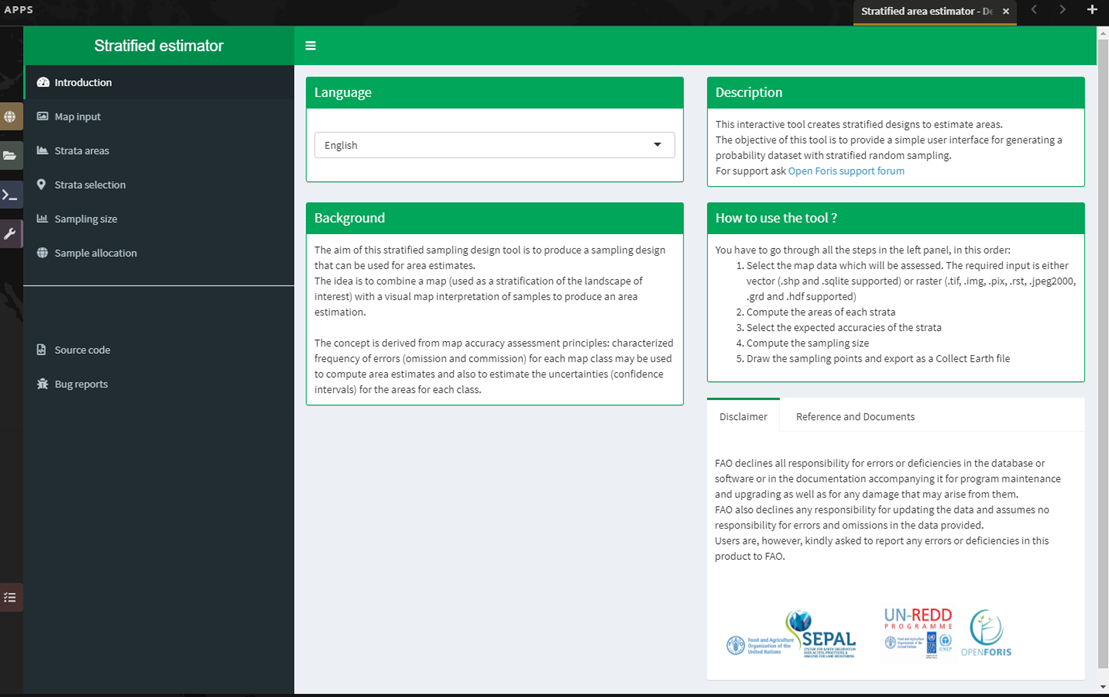

SEPAL#

The primary tool needed to complete this tutorial is the System for Earth Observation Data Access, Processing and Analysis for Land Monitoring (SEPAL) – a web-based cloud computing platform that enables users to create image composites, process images, download files, create stratified sampling designs and more (all from your browser). Part of the Open Foris suite of tools, SEPAL was designed by the Food and Agricultural Organization of the United Nations (FAO) to aid in remote sensing applications in developing countries. Geoprocessing is possible via Jupyter, JavaScript, R, R Shiny apps, and RStudio; the platform also integrates GEE and CEO.

SEPAL provides a platform for users to access satellite imagery (Landsat and Sentinel-2) and perform change detection and land cover classifications using a set of easy-to-use tools. SEPAL was designed to be used in developing countries where internet access is limited and computers are often outdated and inefficient for processing satellite imagery. It achieves this by utilizing a cloud-based supercomputer, which enables users to process, store and interpret large amounts of data. (There are many advanced functions available in SEPAL, which we will not cover in this article.)

CEO#

CEO is a free, open-source image viewing and interpretation tool, suitable for projects requiring information about land cover and/or land use, enabling simultaneous visual interpretations of satellite imagery, providing global coverage from MapBox and Bing Maps, a variety of satellite data sources from GEE, and the ability to connect to your own Web Map Service (WMS) or Web Map Tile Service (WMTS). The full functionality is implemented online; no desktop installation is necessary. CEO allows institutions to create projects and enables their teams to collect spatial data using remote sensing imagery. Use cases include historical and near real-time interpretation of satellite imagery and data collection for land cover/land use model validation.

GEE#

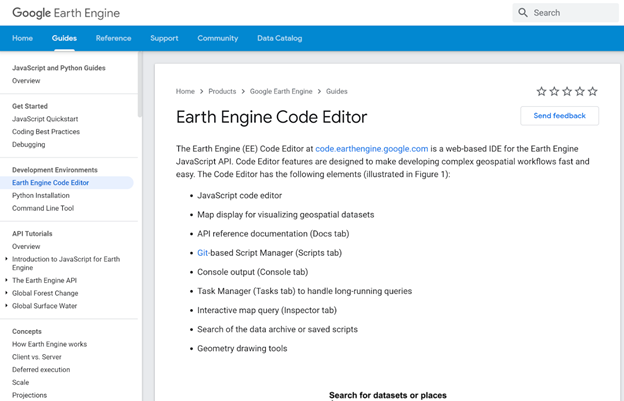

GEE combines a multi-petabyte catalog of satellite imagery and geospatial datasets with planetary-scale analysis capabilities and makes it available for scientists, researchers and developers to detect changes, map trends and quantify differences on the Earth’s surface. The code portion of GEE (called Code editor) is a web-based IDE for the GEE JavaScript API; its features are designed to make developing complex geospatial workflows fast and easy.

The Code editor has the following elements:

JavaScript code editor;

a map display for visualizing geospatial datasets;

an API reference documentation (Docs tab);

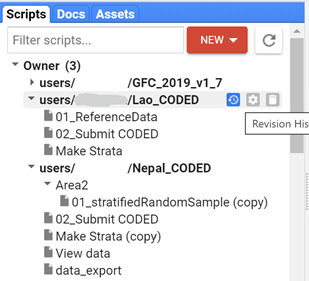

Git-based script manager (Scripts tab);

Console output (Console tab);

Task manager (Tasks tab) to handle long-running queries;

Interactive map query (Inspector tab);

search of the data archive or saved scripts; and

geometry drawing tools.

Tip

For more information, see:

Project planning#

Project planning and methods documentation play a key role in any remote sensing analysis project. While we use example projects in this article, you may use these techniques for your own projects in the future.

We encourage you to think about the following items to ensure that your resulting products will be relevant and that your chosen methods are well documented and transparent:

Descriptions and objectives of the project (issues and information needs).

Are you trying to conform to an Intergovernmental Panel on Climate Change (IPCC) Tier?

Descriptions of the end user product (data, information, monitoring system or map that will be created by the project).

What type of information do you need (e.g. map, inventory, change product)?

Do you need to know where different land cover types exist or do you just need an inventory of how much there is?

How will success be defined for this project? Do you require specific accuracy or a certain level of detail in the final map product?

Description of the project area/extent (e.g. national, subnational, specific forest).

Description of the features/classes to be modeled or mapped.

Do you have a national definition of “forest”?

Are you aware of the IPCC guidelines for the recommended land-use classes and how they will relate to mapping land cover?

Do you have key categories that will drive different analysis techniques?

Considerations for measuring, reporting and verifying your data.

Do you have a strategy? Do you know what is required? Do you know where to get the required information? Looking ahead, are you on the right path? Who are the decision makers that will inform these strategies?

What field data will be required for classification and accuracy assessment?

Do you have an existing National Forest Monitoring System (NFMS) in place?

Will you supplement your remote sensing project with existing data (local data on forest type, management intent, records of natural disturbance, etc.)?

Partnerships (vendors, agencies, bureaus, etc.).

Mosaic generation (Landsat & Sentinel 2)#

SEPAL provides a robust interface for generating Landsat and Sentinel 2 mosaics. Mosaic creation is the first step for the image classification and two-date change detection processes covered in Module 2 and Module 3, respectively. These mosaics can be downloaded locally or to your Google Drive account.

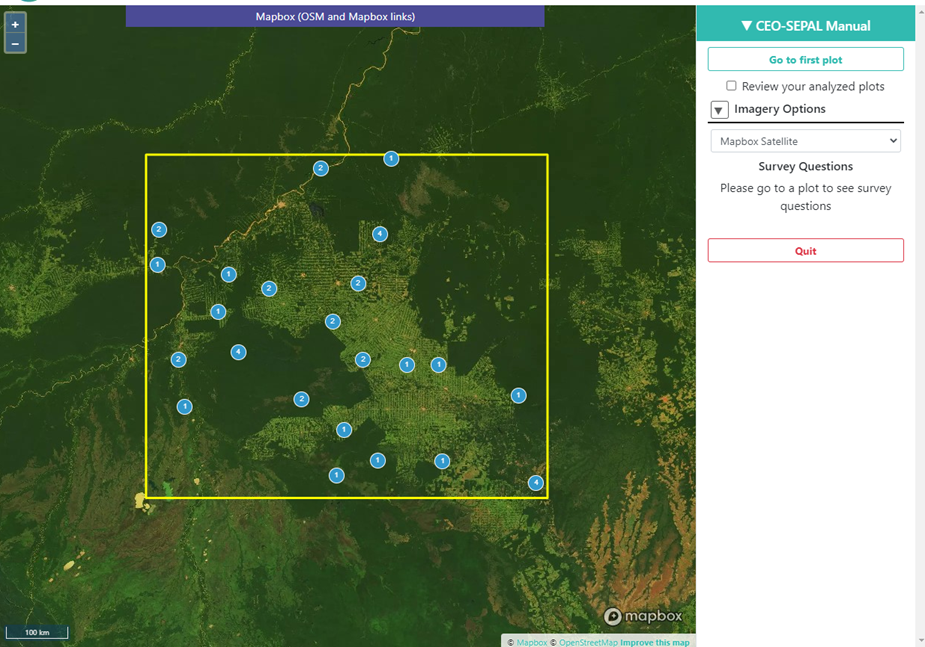

In this tutorial, you will create a Landsat mosaic for the Mai Ndombe region of the Democratic Republic of the Congo, where REDD+ projects are currently underway.

Note

Objectives

learn how to create an image mosaic;

familiarize yourself with a variety of options for selecting dates, sensors, mosaicking and download options; and

create a cloud-free mosaic for 2016.

Note

Prerequisites

SEPAL account registration

Create a Landsat mosaic#

If SEPAL is not already open, open your browser and go to: https://sepal.io/ . Log in to your SEPAL account.

Select the Processing tab.

Then, select Optical Mosaic.

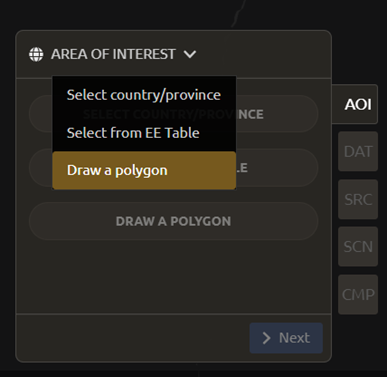

When the Optical mosaic tab opens, you will see an Area of interest (AOI) window in the lower-right corner of your screen.

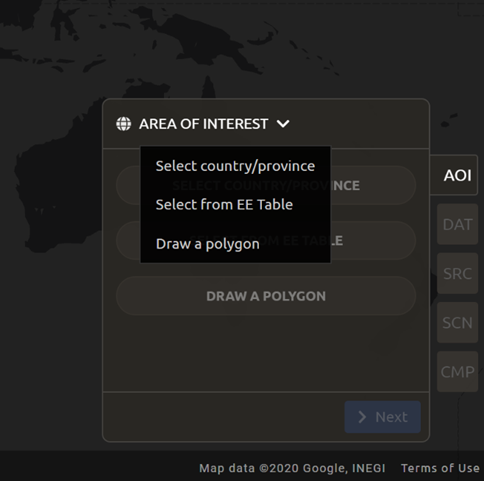

There are three ways to choose your AOI. Open the menu by selecting the carrot on the right side of the window label.

Select Country/Province (the default)

Select from EE table

Draw a polygon

We will use the Select a country/province option.

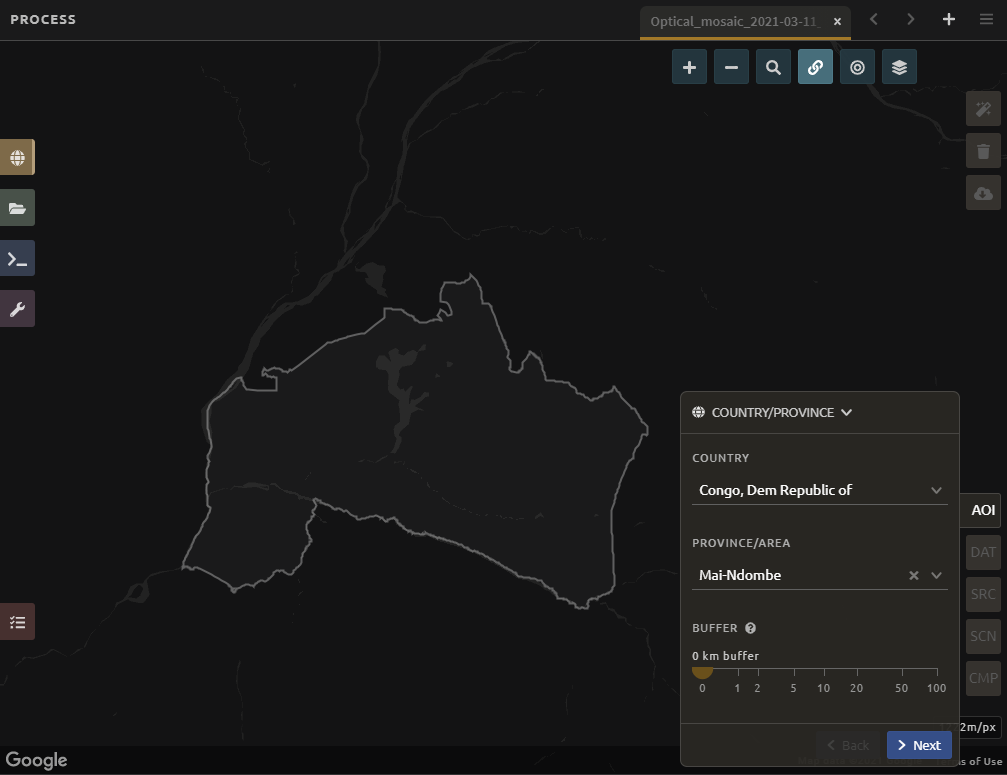

In the list of countries, scroll down until you see the available options for Congo, Dem Republic of (note: There is also the Republic of Congo, which is not what we’re looking for).

Note

Under Province/Area, notice that there are many different options.

Select Mai-Ndombe.

Tip

Optional: You can add a Buffer to your mosaic. This will include an area around the province of the specified size in your mosaic.

Select Next.

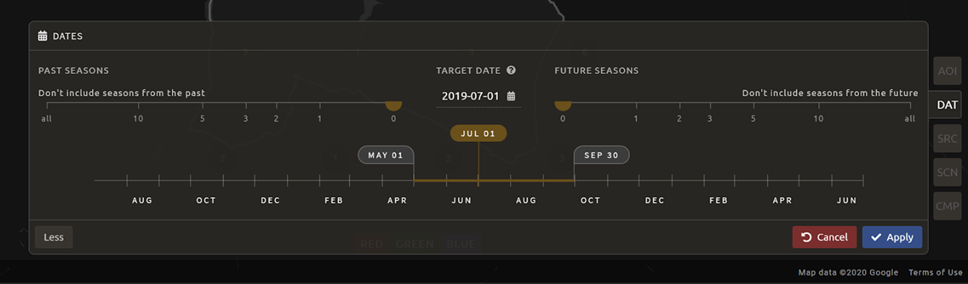

In the Date menu, you can choose the Year you are interested in or select More.

This interface allows you to refine the dates or seasons you are interested in.

You can select a

target date(the date in which pixels in the mosaic should ideally come from), as well as adjust the start- and end-date flags.You can also include additional seasons from the past or the future by adjusting the

Past SeasonsandFuture Seasonsslider. This will include additional years’ data of the same dates specified (if you’re interested in August 2015, including one future season will also include data from August 2016). (This is useful if you’re interested in a specific time of year, but there is significant cloud cover.)For this exercise, let’s create imagery for the dry season of 2019.

Select July 1 of 2019 as your target date (2019-07-01), and move your date flags to May 1-September 30.

Select

Apply.

Now select the Data Sources (SRC) you’d like. Here, select the Landsat L8 & L8 T2 option. The color of the label turns brown once it has been selected. Select Done.

L8 began operating in 2012 and is continuing to collect data.

L7 began operating in 2001, but has a scan-line error that can be problematic for dates between 2005-present.

L4-5 TM, collected data from July 1982-May 2012.

Sentinel 2 A+B began operating in June 2015.

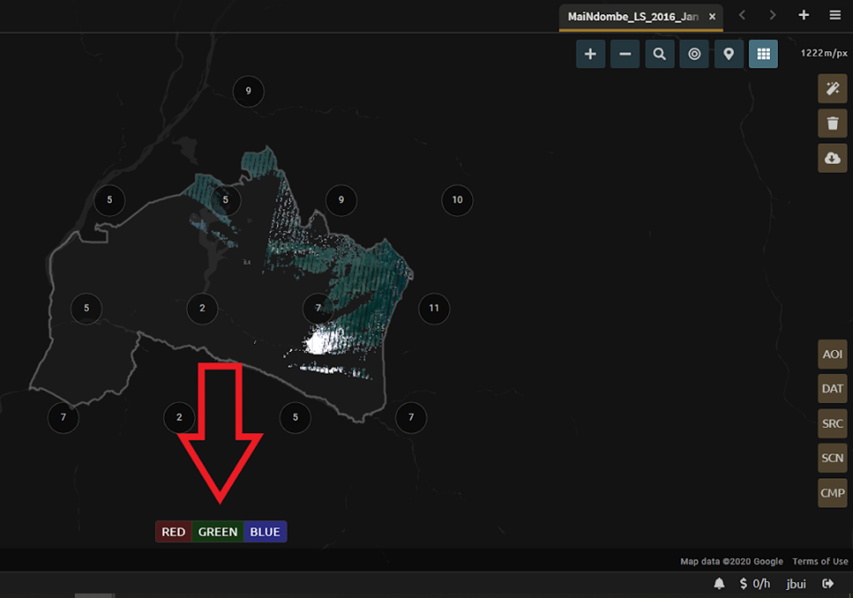

SEPAL will load a preview of your data. By default, it will show you where RGB band data is available. You can click on the RGB image at the bottom to choose from other combinations of bands or metadata.

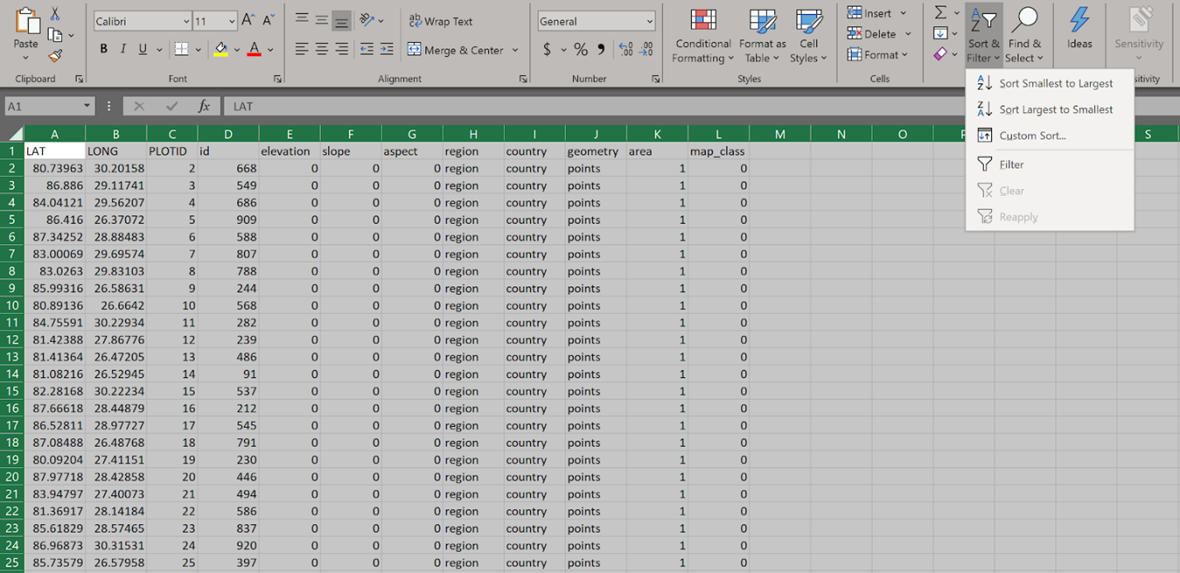

When it is done, examine the preview to see how much data is available. For this example, coverage is good. However, in the future when you are creating your own mosaic, if there is not enough coverage of your AOI, you will need to adjust your parameters. To do so, notice the five tabs in the lower right. You can adjust the initial search parameters using the first three of these tabs (e.g. select Dat to expand the date range). The last two tabs are for Scene selection and Composite, which are more advanced filtering steps. We’ll cover those now.

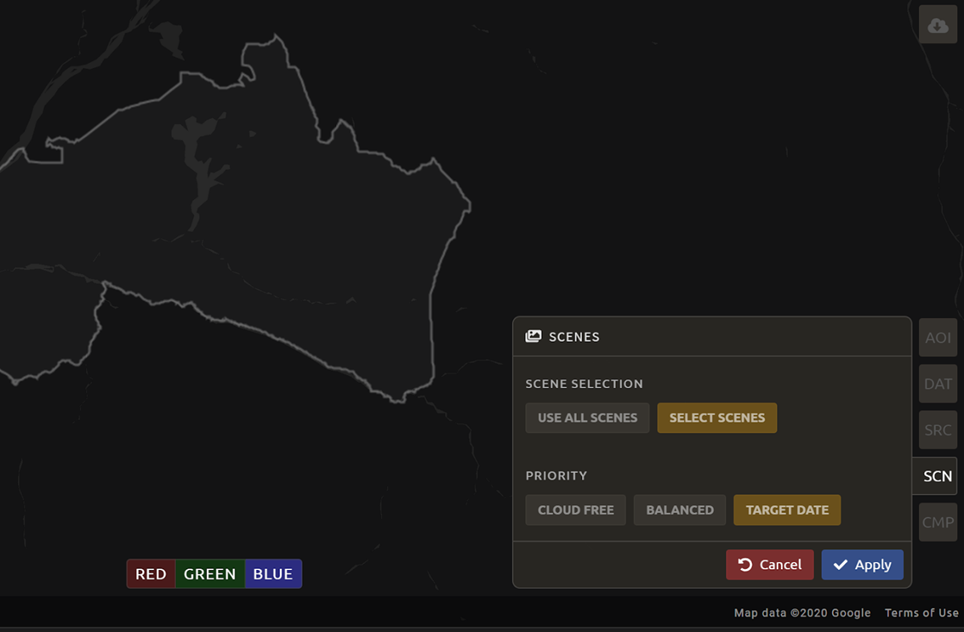

We’re now going to go through the Scene selection process. This allows you to change which specific images to include in your mosaic.

You can change the scenes that are selected using the

SCNbutton on the lower right of the screen. You can use all scenes or select which are prioritized. You can revert any changes by selectingUse All Scenesand thenApply.Change the Scenes by selecting Select scenes with Priority: Target date

Select Apply. The result should look like the image below.

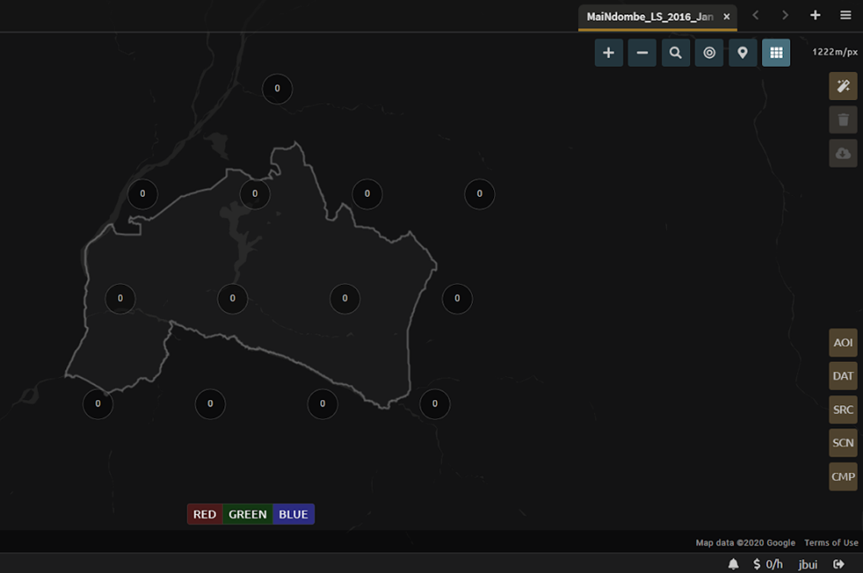

Note

Notice that the collection of circles over the Mai Ndombe study area are all populated with a zero. These represent the locations of scenes in the study area and the numbers of images per scene that are selected. The number is currently 0 because we haven’t selected the scenes yet.

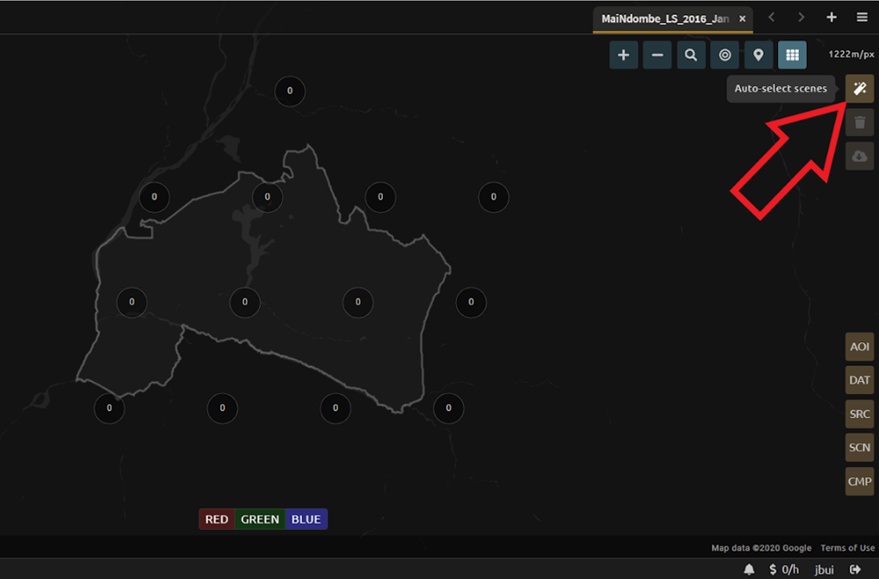

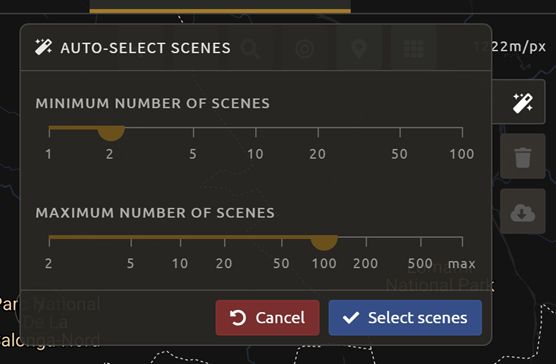

Choose the Auto-Select button to auto-select some scenes.

You may set a minimum and maximum number of images per scene area that will be selected. Increase the minimum to 2 and the maximum to 100. Choose Select Scenes. If there is only one scene for an area, that will be the only one selected despite the minimum.

You should now see imagery with overlaying circles, indicating how many scenes are selected.

You will notice that the circles that previously displayed a O now display a variety of numbers. These numbers represent the number of Landsat images per scene that meet your specifications.

Hover over one of the circles to see the footprint (outline) of the Landsat scene that it represents. Select that circle.

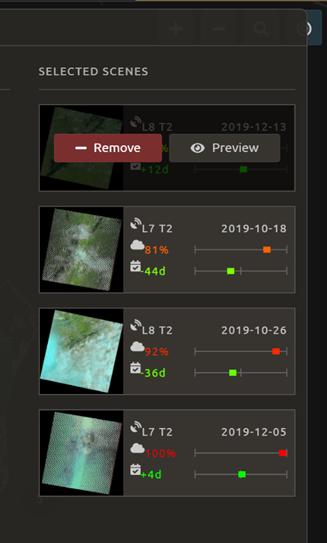

In the window that opens, you will see a list of selected scenes on the right side of the screen. These are the images that will be added to the mosaic. There are three pieces of information for each:

Satellite (e.g. L8, L7, L5 or L4)

Percent cloud cover

Number of days from the target date

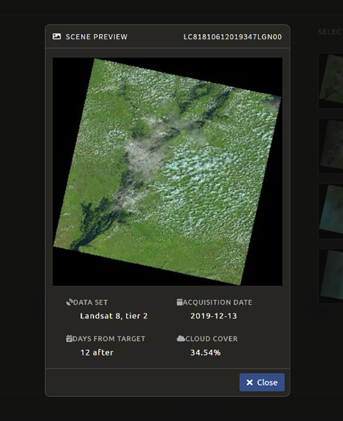

To expand the Landsat image, hover over one of the images and select Preview. Click on the image to close the zoomed-in graphic and return to the list of scenes.

To remove a scene from the composite, select the Remove button when you hover over the selected scene.

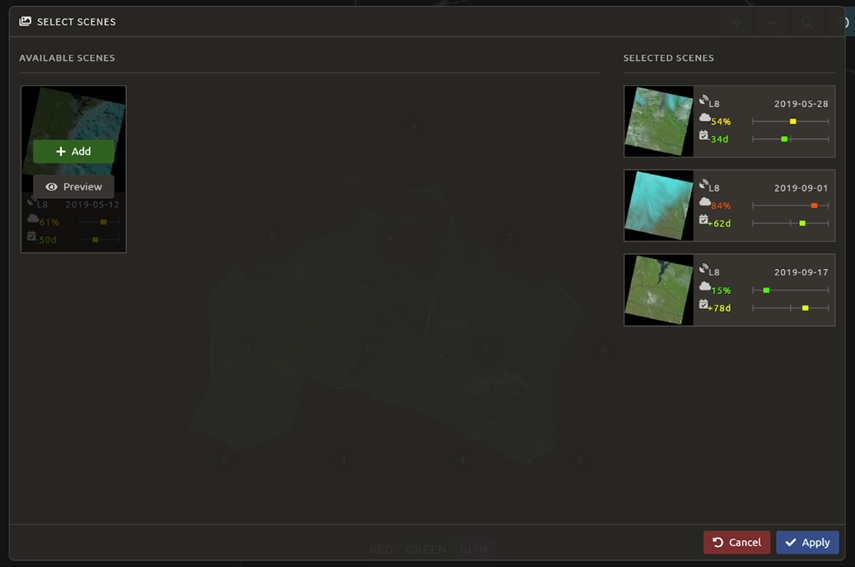

On the leftmost side, you will see Available scenes, which are images that will not be included in the mosaic, but can be added to it. If you have removed an image and would like to re-add it, or if there are additional scenes you would like to add, hover over the image and select Add.

Once you are satisfied with the selected imagery for a given area, select

Closein the lower-right corner.You can then select different scenes (represented by the circles) and evaluate the imagery for each scene.

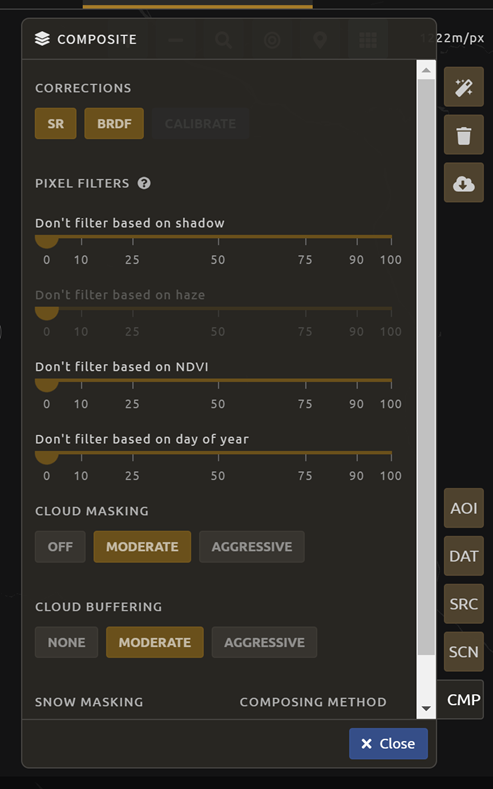

You can also change the composing method using the CMP button in the lower right.

Note

Notice that there are several additional options including shadow tolerance, haze tolerance, Normalized Difference Vegetation Index (NDVI) importance, cloud masking, and cloud buffering.

For this exercise, we will leave these at their default settings. If you make changes, select Apply after you’re done.

Now we’ll explore the Bands dropdown. Select Red|Green|Blue at the bottom of the page.

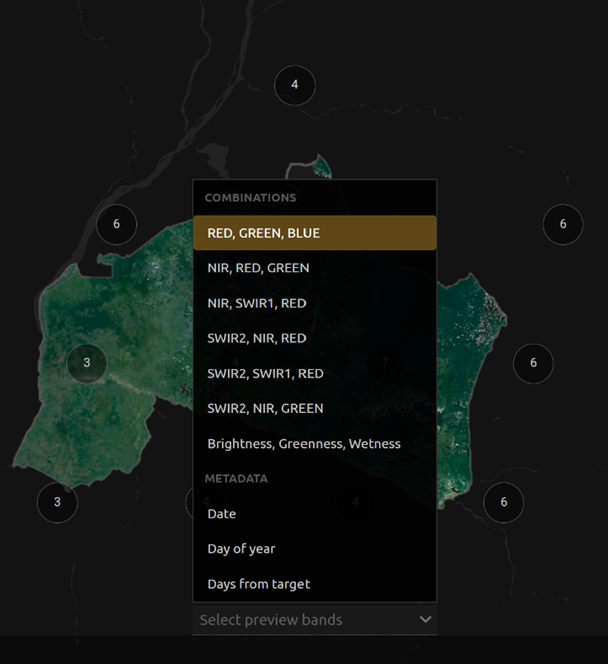

The dropdown menu will appear, as seen below.

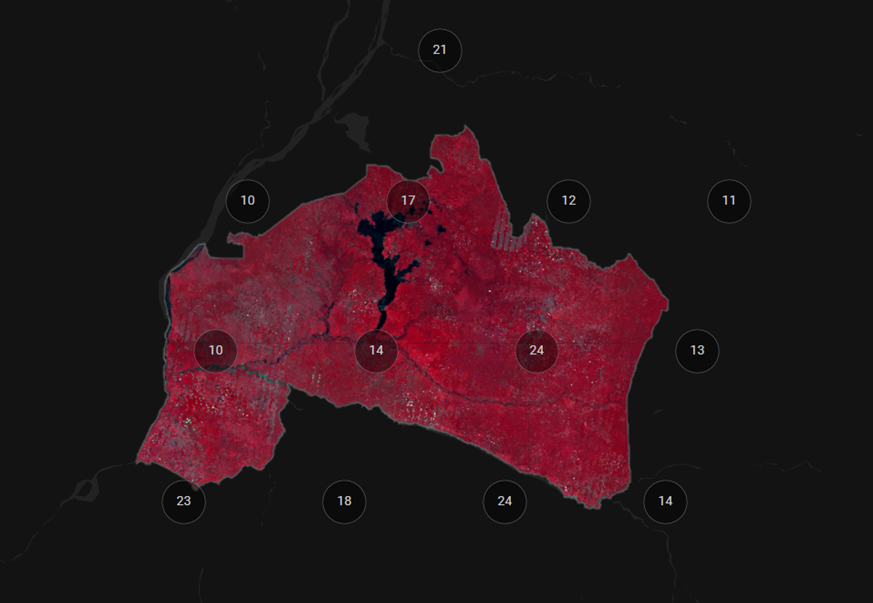

Select the NIR, RED, GREEN band combination (NIR stands for near infrared). This band combination displays vegetation as red (darker reds indicate dense vegetation); bare ground and urban areas appear grey or tan; water appears black.

Once selected, the preview will automatically show what the composite will look like.

Use the scroll wheel on your mouse to zoom in on the mosaic; then, click and move to pan around the image. This will help you assess the quality of the mosaic.

The map now shows the complete mosaic that incorporates all user-defined settings. Here is an example (yours may look different depending on which scenes you chose).

Using what you’ve learned, take some time to explore adjusting some of the input parameters and examine the influence on the output. Once you have a composite you are happy with, we will download the mosaic (instructions follow). For example, if you have too many clouds in your mosaic, then you may want to adjust some of your settings or choose a different time of year when there is a lower likelihood of cloud cover. The algorithm used to create this mosaic attempts to remove all cloud cover, but is not always successful in doing so. Portions of clouds often remain in the mosaic.

Name and save your recipe and mosaic#

Now, we will name the recipe for creating the mosaic and explore options.

Note

You will use this recipe when working with the classification or change detection tools, as well as when loading SEPAL mosaics into SEPAL’s CEO.

Tip

You can make the recipe easier to find by naming it. Select the tab in the upper right and enter a new name. For this example, use MiaNdombe_LS8_2019_Dry.

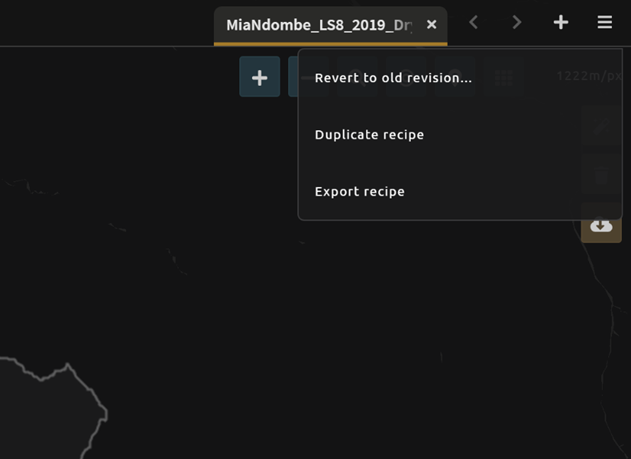

Let’s explore options for the recipe. Select the three lines in the upper-right corner:

You can Save the recipe (SEPAL will do this automatically on retrieval) so that it is available later.

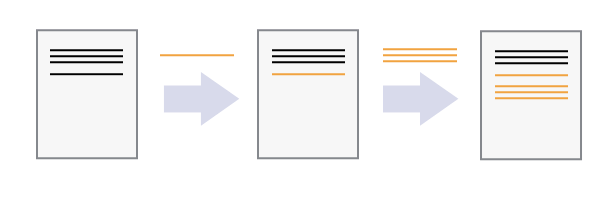

You can also Duplicate the recipe. This is useful for creating two years of data, which we will do in Module 3.

Finally, you can Export the recipe. This downloads a ZIP file with a JavaScript Object Notation (JSON) of your mosaic specifications.

Select Save recipe…. This will also let you rename the mosaic, if you choose.

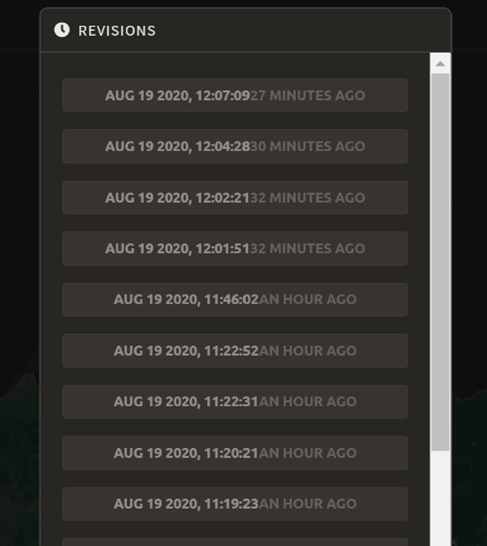

If you click on the three lines icon, you should see an additional option: Revert to old revision…

Choosing this option brings up a list of auto-saved versions from SEPAL. You can use this to revert changes if you make a mistake.

Tip

Now, when you open SEPAL and click the Search option, you will see a row with this name that contains the parameters you just set.

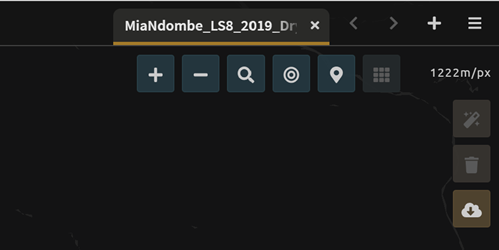

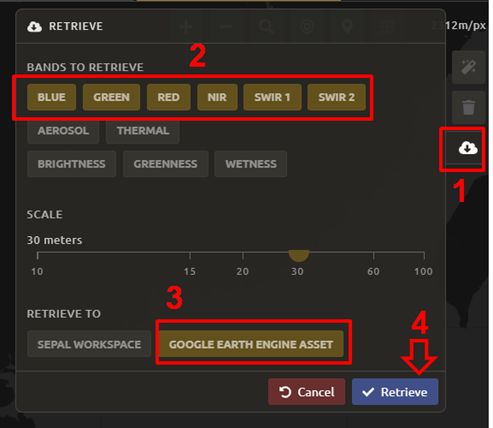

Finally, we will save the mosaic itself. This is called “retrieving” the mosaic. This step is necessary to perform analysis on the imagery.

To download this imagery mosaic to your SEPAL account, select the Retrieve button.

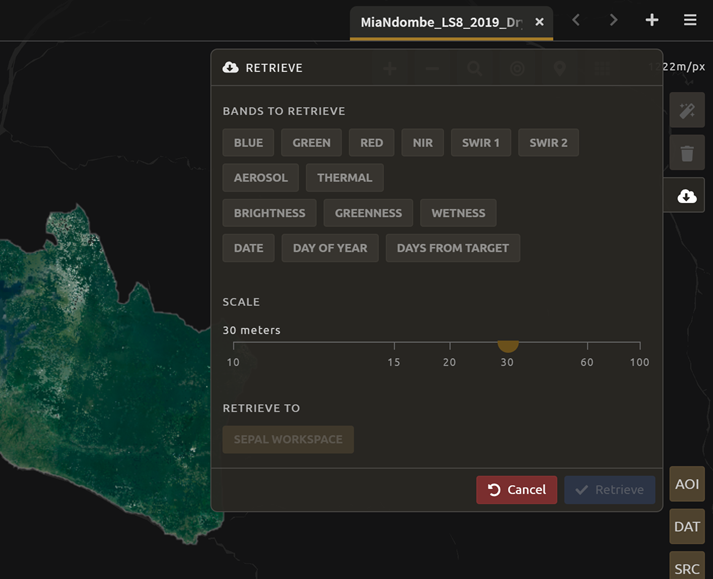

A window will appear with the following options:

Bands to Retrieve: select the desired bands you would like to include in the download.

Select the Blue, Green, Red, NIR, SWIR 1 and SWIR 2 bands. This will show you visible and infrared data collected by Landsat.

Other bands that are available include Aerosol, Thermal, Brightness, Greenness, and Wetness (more information on these can be found at: https://landsat.gsfc.nasa.gov/landsat-data-continuity-mission).

Metadata on Date, Day of Year, and Days from Target can also be selected.

Scale: The resolution of the mosaic. Landsat data is collected at 30 metre (m) resolution, so we will leave the slider there.

Retrieve to: The SEPAL workspace is the default option. Other options may appear depending on your permissions.

When you have the desired bands selected, select Retrieve.

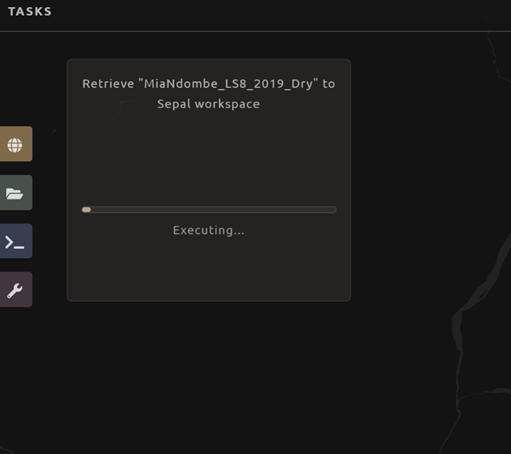

You will notice the Tasks icon is now spinning. If you select it, you will see that data retrieval is in process. This step will take some time.

Note

This will take approximately 25 minutes to finish downloading; however, you can move on to the next exercise without waiting for the download to finish.

Image classification#

The main goal of Module 2 is to construct a single-date land cover map by classification of a Landsat composite generated from Landsat images. Image classification is frequently used to map land cover, describing what the landscape is composed of (grass, trees, water and/or an impervious surface), and to map land use, describing the organization of human systems on the landscape (farms, cities and/or wilderness).

Learning to do image classification well is extremely important and requires experience. This module was designed to help you acquire some experience. You will first consider the types of land cover classes you would like to map and the amount of variability within each class. There are both supervised (using human guidance, including training data) and unsupervised (not using human guidance) classification methods. The “random forest approach” demonstrated here uses training data and is thus a supervised classification method.

There are a number of supervised classification algorithms that can be used to assign the pixels in the image to the various map classes. One way of performing a supervised classification is to utilize a machine learning (ML) algorithm. Machine learning algorithms utilize training data combined with image values to learn how to classify pixels. Using manually collected training data, these algorithms can train a classifier, and then use the relationships identified in the training process, to classify the rest of the pixels in the map. The selection of image values (e.g. NDVI, elevation) used to train any statistical model should be thoroughly thought out and informed by your knowledge of the phenomenon of interest to classify your data (e.g. by forest, water, clouds).

In this module, we will create a land cover map using supervised classification in SEPAL. We will train a random forest machine learning algorithm to predict land cover with a user-generated reference data set. This dataset is collected either in the field or manually through examination of remotely sensed data sources, such as aerial imagery. The resulting model is then applied across the landscape. You will complete an accuracy assessment of the map output in Module 4.

Before starting your classification, you will need to create a response design with details about each of the land covers/land uses that you want to classify (Exercise 2.1); create mosaics for your area of interest (Section 2.2; we use a region of Brazil); and collect training data for the model (Exercise 2.3). Then, in Exercise 2.4, we will run the classification and examine our results.

The workflow in this module has been adapted from exercises and material developed by Dr. Pontus Olofsson, Christopher E. Holden, and Eric L. Bullock at Boston Education in Earth Observation Data Analysis (BEEODA) in the Department of Earth & Environment at Boston University. To learn more about their materials and their work, visit their GitHub site at beeoda.

At the end of this module, you will have a classified land use/land cover map.

Note

This section takes approximately four hours to complete.

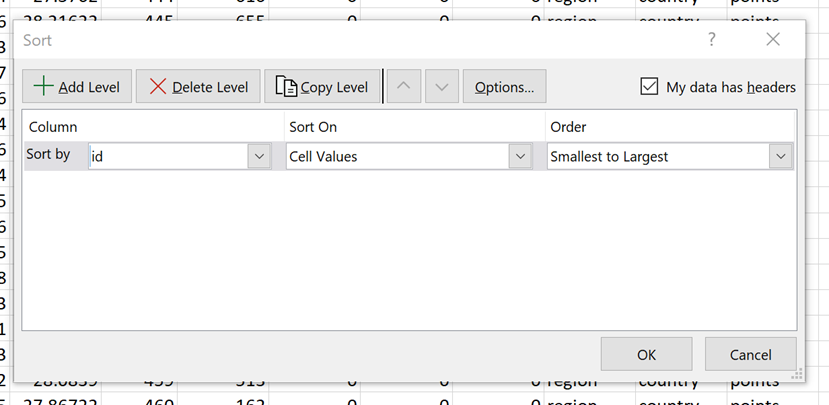

Response design for classification#

Creating consistent labeling protocols is necessary for creating accurate training data and accurate sample-based estimates (see Module 4). They are especially important when more than one researcher is working on a project and for reproducible data collection. Response design helps a user assign a land cover/land use class to a spatial point. The response design is part of the metadata for the assessment and should contain the information necessary to reproduce the data collection, laying out an objective procedure that interpreters can follow and that reduces interpreter bias.

In this exercise, you will build a decision tree for your classification, as well as a significant amount of the other documentation and decision points (for more information about decision points, see Section 5.1).

Objective:

Learn how to create a classification scheme for land cover/land use classification mapping.

Specify the classification scheme#

“Classification scheme” is the name used to describe the land cover and land use classes adopted. It should cover all of the possible classes that occur in the AOI. Here, you will create a classification scheme with detailed definitions of the land cover and land use classes to share with interpreters.

Create a decision tree for your land cover or land-use classes. There may be one already in use by your department. The tree should capture the most important classifications for your study (see following example).

This example includes a hierarchical component. The green and red categories have multiple sub-categories, which might be multiple types of forest, crops or urban areas. You can also have classification schemes that are all one level with no hierarchical component.

For this exercise, we’ll use a simplified land cover and land use classification, as in this graph:

![digraph process {

lc [label="Land cover", shape=box];

f [label="Forest", shape=box, style="filled" color="darkgreen"];

nf [label="Non-forest", shape=box, style="filled", color="grey"];

lc -> f;

lc -> nf;

}](../_images/graphviz-1fb635eedd54ea4cfa901b09d422fed8789ca33e.png)

When creating your own decision tree, be sure to specify if your classification scheme was derived from a template, including the IPCC land use categories, CORINE land cover (CLC), or land cover and land use, landscape (LUCAS).

If applicable, your classification scheme should be consistent with the national land cover and land use definitions.

In cases where the classification scheme definition is different from the national definition, you will need to provide a reason.

Create a detailed definition for each land cover and land use change class included in the classification scheme. We recommend that you include measurable thresholds.

Our classification will take place in an area of the Amazon rainforest undergoing deforestation in Brazil.

We’ll define Forest as an area containing more than 70% of tree cover.

We’ll define Non-forest as areas with less than 70% of tree cover. This will capture urban areas, water and agricultural fields.

For creating your own classifications, here are some things to keep in mind:

It is important to have definitions for each of the classes. A lack of clear definitions of the land cover classes can make the quality of the resulting maps difficult to assess and challenging for others to use. The definitions you come up with now will probably be working definitions that you find you need to modify as you move through the land cover classification process.

Note

As you become more familiar with the landscape, data limitations and the ability of the land cover classification methods to discriminate some classes better than others, you will undoubtedly need to update your definitions.

As you develop your definitions, you should be relating back to your applications. Make sure that your definitions meet your project objectives. For example, if you are creating a map to be used as part of your United Nations Framework Convention on Climate Change [UNFCCC] greenhouse gas reporting documents, you will need to make sure that your definition of forest meets the needs of this application.

Note

The above land cover tree is an excerpt of text from the Methods and Guidance from the Global Forest Observations Initiative (GFOI) document that describes the IPCC Good Practice Guidance (GPG) forest definition and suggestions to consider when drafting your forest definition (2003). When creating your own decision tree, be sure to specify if your definitions follow a specific standard, such as using ISO standard Land Cover Meta-Language (LCML, ISO 19144-2) or similar.

During this online training course, you will be mapping land cover across the landscape using the Landsat composite, a moderate resolution data set. You may develop definitions based on your knowledge from the field or from investigating high-resolution imagery; however, when deriving your land cover class definitions, it’s also important to be aware of how the definitions relate to the data used to model the land cover.

Note

You will continue to explore this relationship throughout the exercise. Will the spectral signatures between your land cover categories differ? If the spectral signatures are not substantially different between classes, is there additional data you can use to differentiate these categories? If not, you might consider modifying your definitions.

For additional resources, see http://www.ipcc.ch/ipccreports/tar/wg2/index.php?idp=132

Create a mosaic for classification#

We first need an image to classify before running a classification. For best results, we will need to create an optical mosaic with good coverage of our study area. We will build upon knowledge gained in Module 1 to create an optical mosaic in SEPAL and retrieve it in GEE.

In SEPAL, you can run a classification on either a mosaic recipe or on a GEE asset. It is best practice to run a classification using an asset, rather than on the fly with a recipe. This will improve how quickly your classification will export and avoid computational limitations.

Note

Objectives:

Build on knowledge gained in Module 1.

Create a mosaic to be the basis for your classification.

Creating and exporting a mosaic for a drawn AOI#

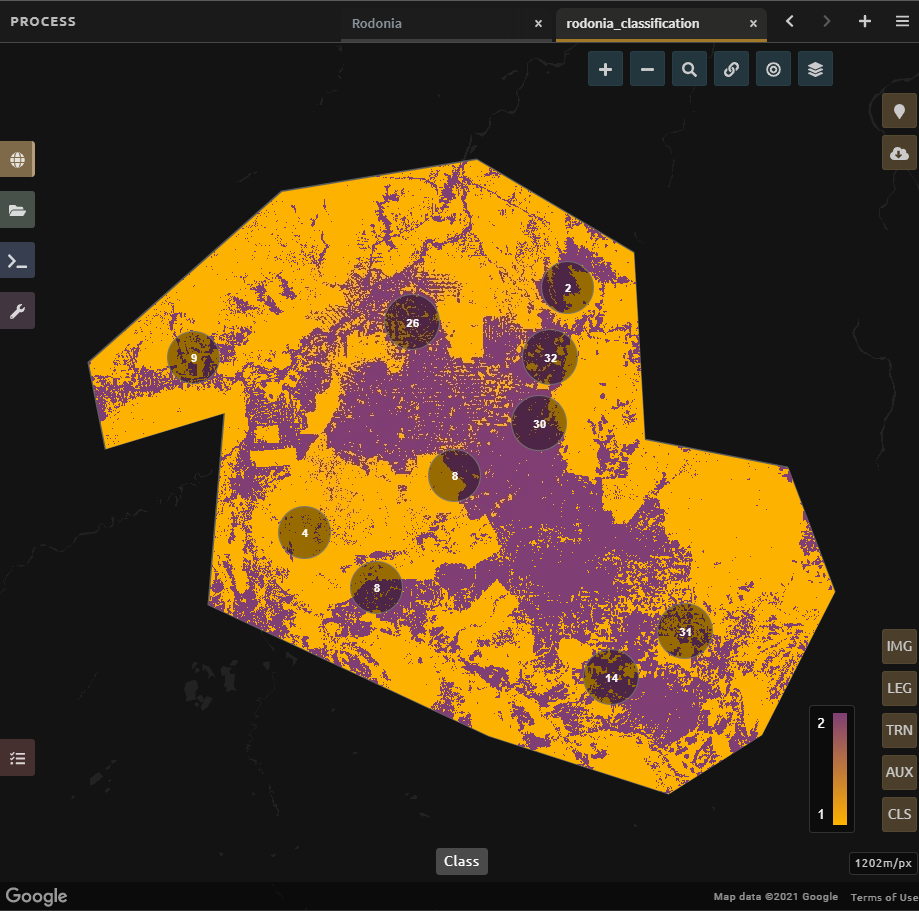

We will create a mosaic for an area in the Amazon basin. If any of the steps for creating a mosaic are unfamiliar, please revisit Module 1.

Navigate to the Process tab, then create a new optical mosaic by selecting Optical mosaic on the Process menu.

Under Area of Interest:

Choose Draw Polygon from the dropdown list.

Navigate using the map to the State of Rondonia in Brazil. Draw a polygon around it or draw a polygon within the borders (note: a smaller polygon will export faster).

Now use what you have learned in Module 1 to create a mosaic with imagery from the year 2019 (the entire year of a part of the year).

Tip

Don’t forget to consider which satellites and scenes you would like to include (all or some).

Your preview should include imagery data across your entire AOI. This is important for your classification. Try also to get a cloud-free mosaic, as this makes your classification easier.

Name your mosaic for easy retrieval. Try Module2_Amazon.

When you’re satisfied with your mosaic, retrieve it to GEE. Be sure to include the red, green, blue, nir, swir1, and swir2 layers. You may choose to add other layers (e.g. greenness) as well.

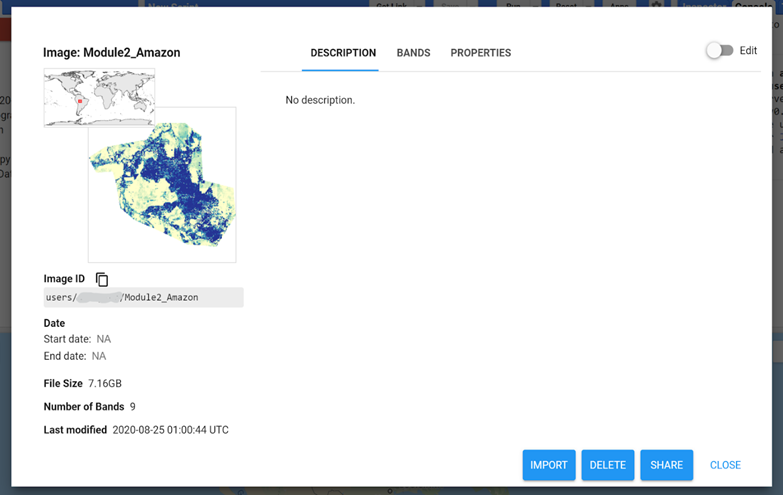

Finding your GEE asset#

For future exercises, you may need to know how to find your GEE asset.

Go to https://code.earthengine.google.com and sign in.

Select the Assets tab in the leftmost column.

Under Assets, look for the name of the mosaic you just exported.

Select the mosaic name.

A pop-up window will appear with information about your mosaic.

Select the two overlapping boxes icon to copy your asset’s location.

Creating a classification and training data collection#

In this exercise, we will learn how to start a classification process and collect training data. These training data points will become the foundation of the classification in Section 2.4. High-quality training data is necessary to get good land cover map results. In the most ideal situation, training data is collected in the field by visiting each of the land cover types to be mapped and collecting attributes. When field collection is not an option, the second best choice is to digitize training data from high-resolution imagery, or at the very least for the imagery to be classified.

In general, there are multiple pathways for collecting training data. To create a layer of points, using desktop GIS, including QGIS and ArcGIS, is one common approach. Using GEE is another approach. You can also use CEO to create a project of random points to identify (see detailed directions in Section 4.1.2). All of these pathways will create a .csv file or a GEE table that you can import into SEPAL to use as your training data set.

However, SEPAL has a built-in reference data collection tool in the classifier. In this exercise, we will use this tool to collect training data. Even if you use a .csv file or GEE table in the future, this is a helpful feature to collect additional training data points to help refine your model.

In this assignment, you will create training data points using high-resolution imagery, including Planet NICFI data. These will be used to train the classifier in a supervised classification using SEPAL’s random forests algorithm. The goal of training the classifier is to provide examples of the variety of spectral signatures associated with each class in the map.

Objective:

Create training data for your classes that can be used to train a machine learning algorithm.

Prerequisites:

SEPAL account;

Land cover categories defined in section 2.1; and

Mosaic created in section 2.2

Set up your classification#

In the Process menu, choose the green plus symbol and select Classification.

Add the Amazon optical mosaic for classification:

Select

+ Addand choose either Saved SEPAL Recipe or Earth Engine Asset (recommended).If you choose Saved SEPAL Recipe, select your Module 2 Amazon recipe.

If you choose Earth Engine Asset, enter the Earth Engine Asset ID for the mosaic. The ID should look like “users/username/Module2_Amazon”.

Tip

Remember that you can find the link to your Earth Engine Asset ID via GEE’s Asset tab (section 2.2).

Select bands: Blue, Green, Red, NIR, SWIR1 and SWIR2. You can add other bands as well if you included them in your mosaic.

You can also include Derived bands by selecting the green button in the lower left.

Select

Apply, then selectNext.

Attention

Selecting Saved SEPAL Recipe may cause the following error at the final stage of your classification:

Google Earth Engine error: Failed to create preview

This occurs because GEE gets overloaded. If you encounter this error, please retrieve your classification as described in Section 2.2.

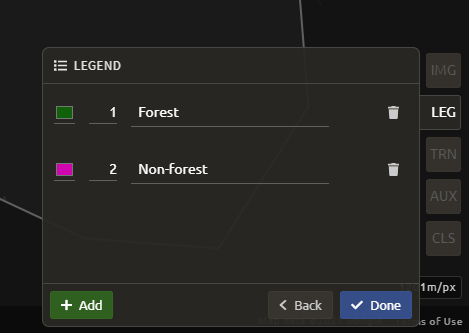

In the Legend menu, choose + Add, which creates a place for you to write your first class label.

You will need two legend entries.

The first should have the number 1 and a class label of Forest.

The second should have the number 2 and a class label of Non-forest.

Choose colors for each class as you see fit.

Select

Close.

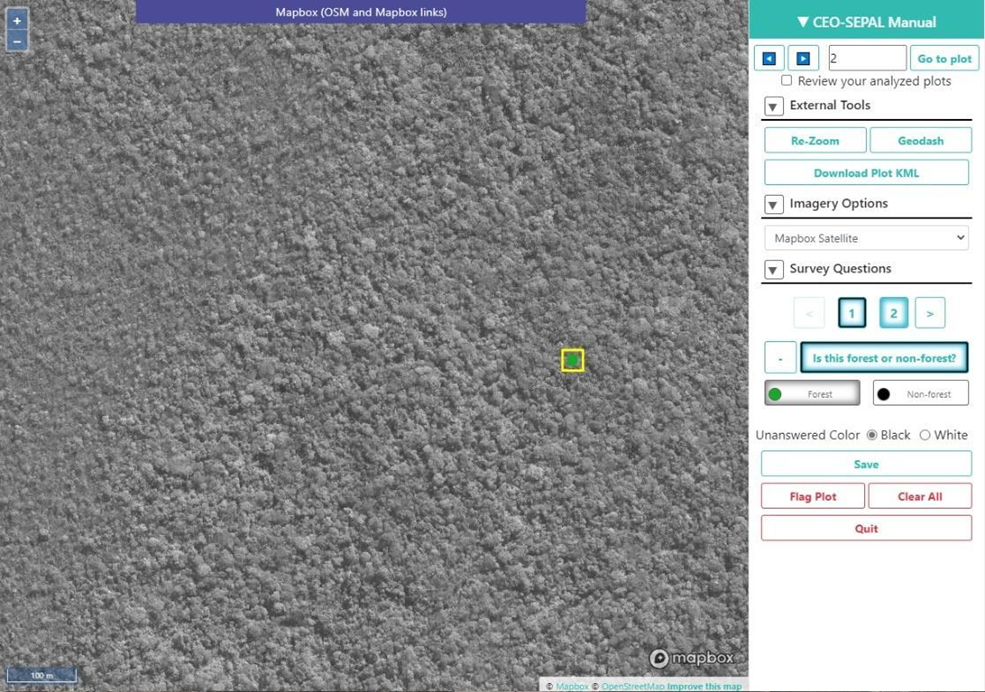

Collect training data points#

Now that you have created your classification, you are ready to begin collecting data points for each land cover class.

In most cases, it is ideal to collect a large amount of training data points for each class that capture the variability within each class and cover the different areas of the study area. However, for this exercise, you will only collect a small number of points (approximately 25 per class). When collecting data points, make sure that your plot contains only the land cover class of interest (no plots with a mixture of your land cover categories).

Tip

To help you understand why the random forest algorithm might get some categories you are trying to map confused with others, you will use spectral signature charts in CEO-SEPAL to look at the NDVI signature of your different land cover classes. You should notice a few things when exploring the spectral signatures of your land cover classes. First, some classes are more spectrally distinct than others. For example, water is consistently dark in the NIR and MIR wavelengths, and much darker than the other classes. This means that it shouldn’t be difficult to separate water from the other land cover classes with high accuracy.

Not all pixels in the same classes have the exact same values — there is some natural variability! Capturing this variation will strongly influence the results of your classification.

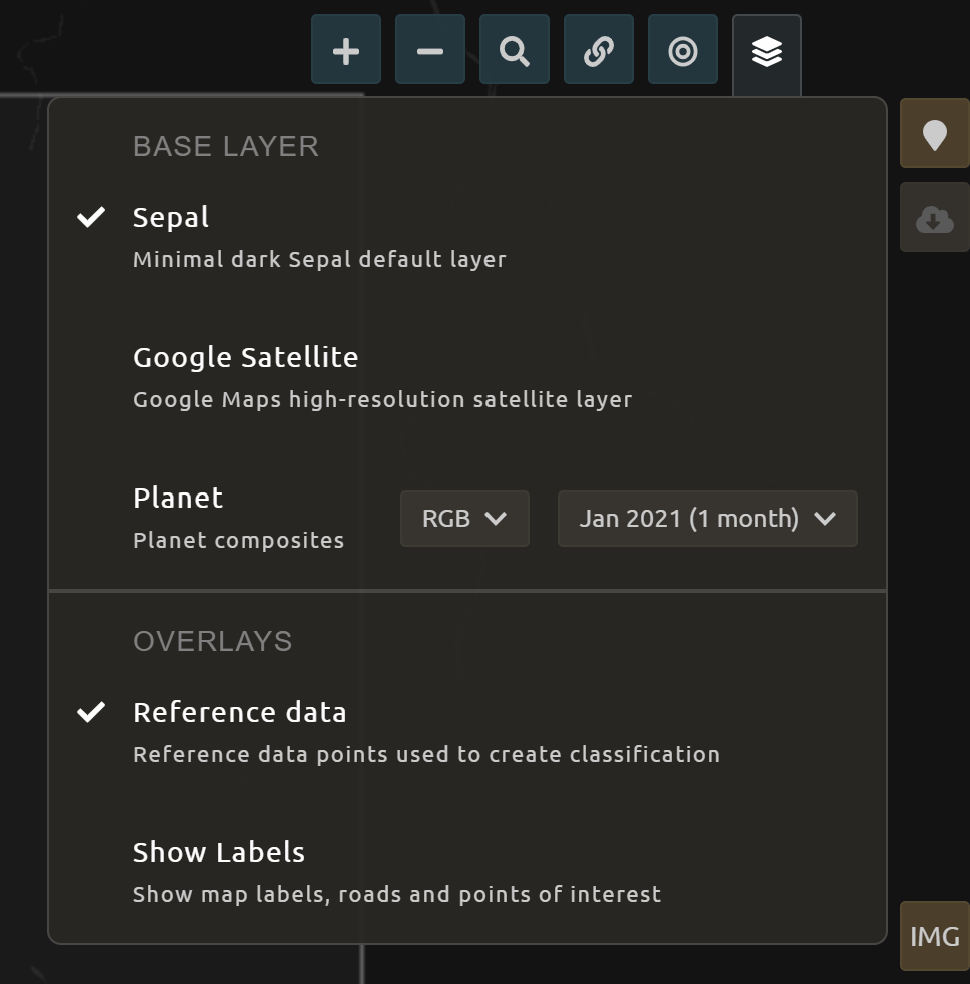

First, let’s become familiar with the SEPAL interface. In the upper-right corner of the map, there is a stack of three rectangles. If you hover over this icon, it says “Select layers to view.”

Note

Available base layers include SEPAL (minimal dark SEPAL default layer), Google Satellite, and Planet NICFI composites.

We will use the Planet NICFI composites for this example. The composites are available in either RGB or false color infrared (CIR). Composites are available monthly after September 2020 and for every 6 months prior from 2015.

Select RGB, Jun 2019 (6 months).

Tip

You can also select “Show labels” to enable labels that can help you orient yourself in the landscape.

Now select the point icon. When you hover over this icon, it says “Enable reference data collection.”

With reference data collection enabled, you can start adding points to your map.

Use the scroll wheel on your mouse to zoom in on the study area. You can drag to pan around the map. Be careful though, as a single click will place a point on the map.

Tip

If you accidentally add a point, you can delete it by selecting the red Remove button.

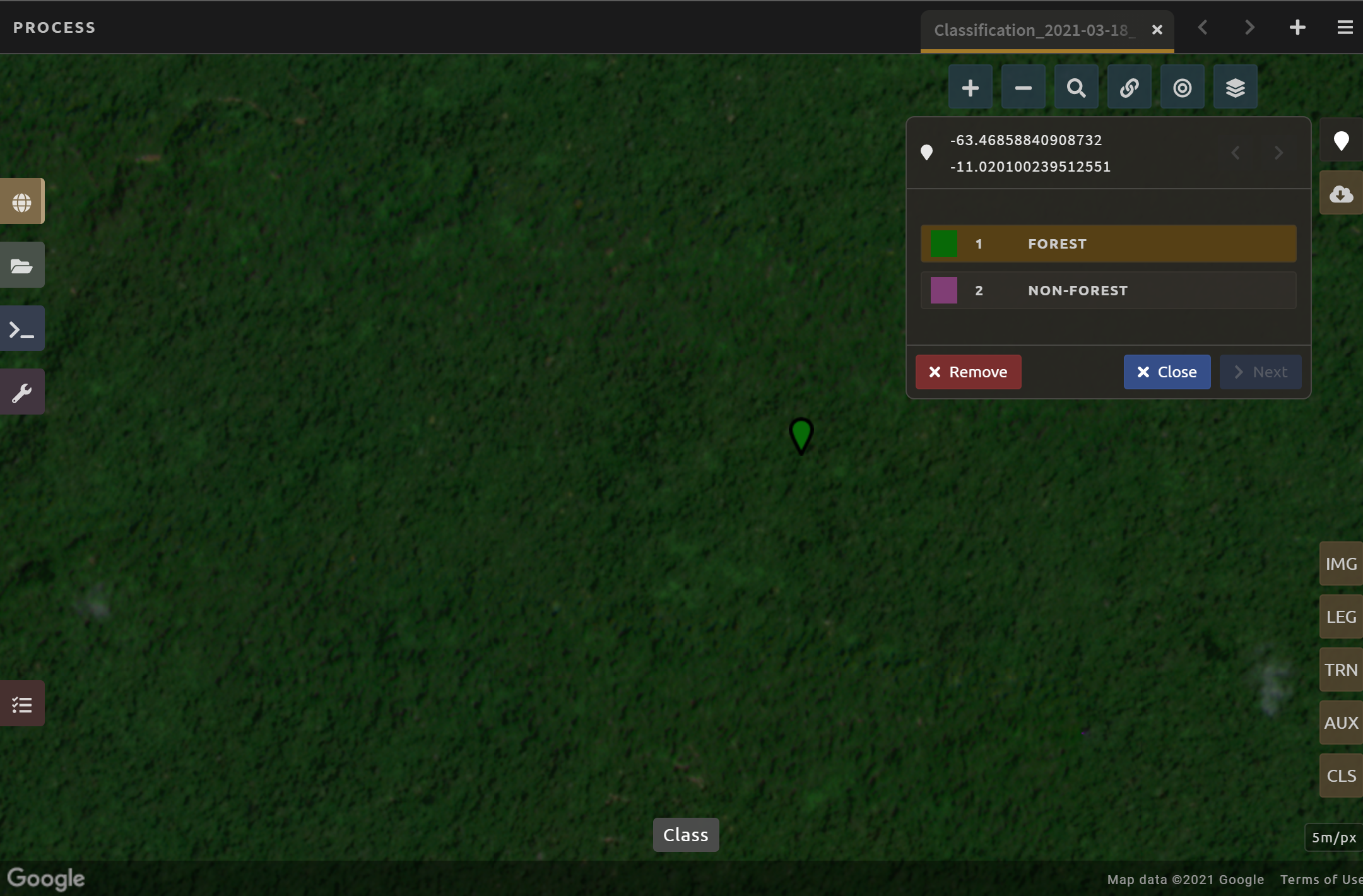

Now we will start collecting forest training data:

Zoom into an area that is clearly forested. When you find an area that is completely forested, click it once.

You have just placed a training data point!

Select the Forest button in the training data interface to classify the point.

Tip

If you haven’t classified the point yet, you can click somewhere else on the map instead of deleting the record.

Note

Ideally you should switch back to the Landsat mosaic to make sure that this forested area is not covered with a cloud. If you mistakenly classify a cloudy pixel as Forest, then the results will be impacted negatively in the event that your Landsat mosaic does have cloud-covered areas.

However, this interface does not allow for switching between the base layer imagery and your exported mosaic. If you are using another training data collection method, keep this point in mind.

If you need to modify the classification of any of your data points, you can select the point to return to the classification (or delete) options.

Begin collecting the rest of the 25 Forest training data points throughout other parts of the study area.

The study area contains an abundance of forested land, so it should be pretty easy to identify places that can be confidently classified as forest. If you’d like, use the Charts function to ensure that there is a relatively high NDVI value for the point.

Ensure you are placing data points within the extent of the mosaic (the state of Rondonia in Brazil).

Collect about 25 points for the Forest land cover class.

Attention

When you are done, zoom out to the full extent of the area. Did you place data points somewhat equally across the full region? Are all points clustered in the same region? It’s best to make sure you have data points covering the full spatial extent of the study region; add more points in areas that are sparsely represented, if needed.

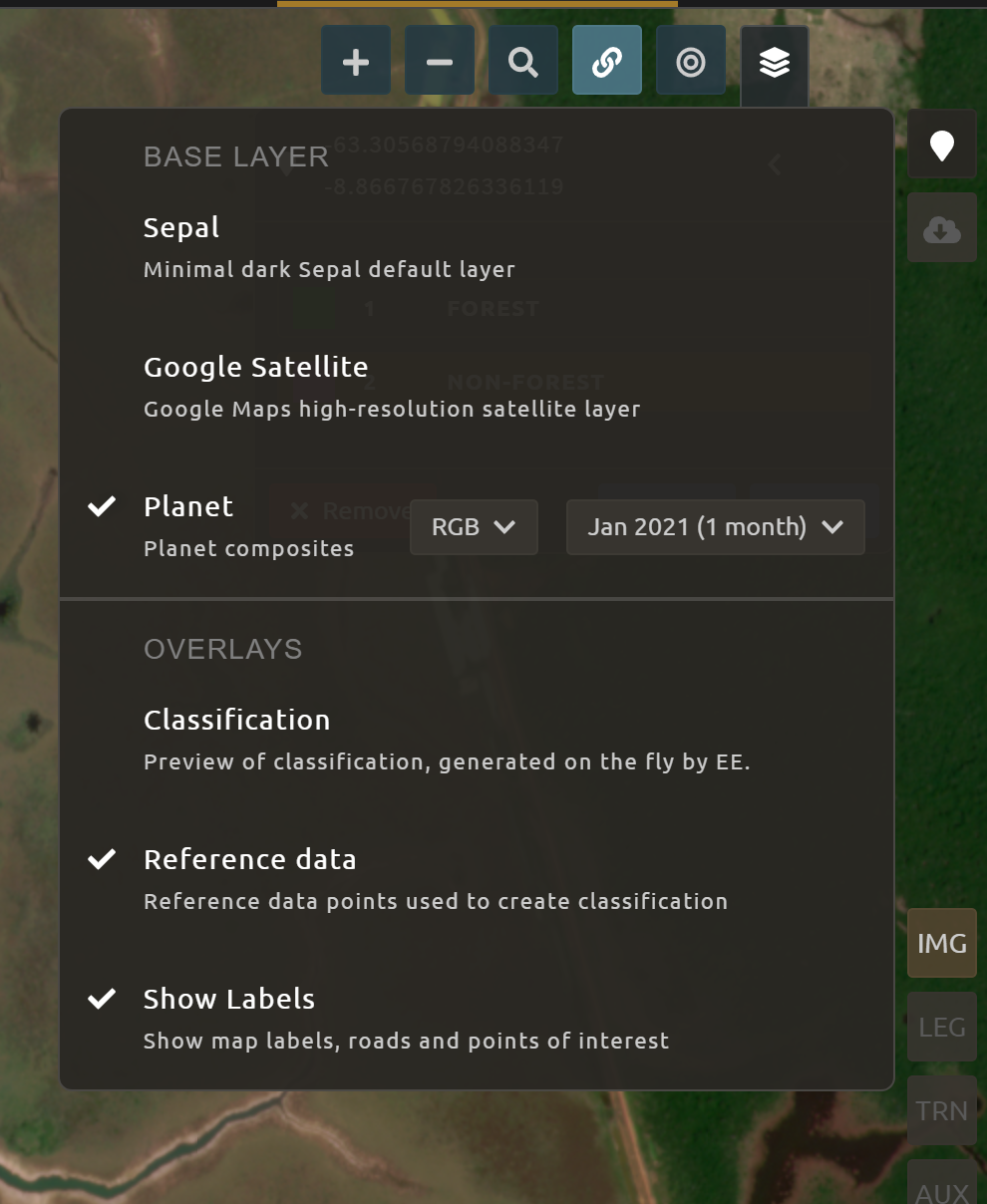

After you collect your training data for Forest, you may see the classification preview appear.

To disable the classification preview to continue to collect training data, return to the map layer selector.

Uncheck the “Classification” overlay.

Once you are satisfied with your forested training data points, move on to the Non-forest training points.

Since we are using a very basic set of land cover classes for this exercise, this should include agricultural areas, water, and buildings and roads. Therefore, it will be important that you focus on collecting a variety of points from different types of land cover throughout the study area.

Water is one of the easiest classes to identify and the easiest to model, due to the distinct spectral signature of water.

Look for bodies of water within Rondonia.

Collect 10-15 data points for Water and be sure to spread them throughout Lake Mai Ndombe, the water sources feeding into it, and a couple of the bodies of water (including rivers) to the eastern side of the mosaic. Be sure to put 2-3 points on rivers.

Some wetland areas may have varying amounts of water throughout the year, so it is important to check both Planet NICFI maps for 2019 (Jun 2019 and Dec 2019).

Let’s now collect some building and road non-forest training data.

There are not many residential areas in the region. However, if you look, you can find homes with dirt roads and some airports.

Place a point or points within these areas and classify them as Non-forest. Do your best to avoid placing the points over areas of the town with lots of trees.

Find some roads, and place points and classify as Non-forest. These may look like areas of bare soil. Both bare soil and roads are classified as Non-forest, so place some points on both.

Next, place several points in grassland/pasture, shrub, and agricultural areas around the study area.

Shrubs or small, non-forest vegetation can sometimes be hard to identify, even with high-resolution imagery. Do your best to find vegetation that is clearly not forest.

The texture of the vegetation is one of the best ways to differentiate between trees and grasses/shrubs. Look at the below image and notice the clear contrast between the area where the points are placed and the other areas in the image that have rougher textures and that create shadows.

Note

If you are using QGIS (or similar) to collect training data, you should also collect Cloud training data in the Non-forest class – if your Landsat has any clouds. If there are some clouds that were not removed during the Landsat mosaic creation process you will need to create training data for the clouds that remain so that the classifier knows what those pixels represent. Sometimes clouds were detected during the mosaic process and were mostly removed. However, you can see that some of the edges of those clouds remain.

Note that you may not have any clouds in your Landsat imagery.

Continue collecting Non-forest points. Again, be sure to spread the points out across the study area.

When you are done collecting data for these categories, zoom out to the full extent of the study region.

Did you place data points somewhat equally across the full region?

Are all points clustered in the same area?

It’s best to make sure you have data points covering the full spatial extent of the study region; add more points in areas that are sparsely represented, if needed.

Classification using machine learning algorithms (Random Forests)#

As mentioned in the module introduction, the classification algorithm you will be using today is called Random forest, which creates numerous decision trees for each pixel. Each of these decision trees votes on what the pixel should be classified as. The land cover class that receives the most votes is then assigned as the map class for that pixel. Random forests are efficient on large data and accurate when compared to other classification algorithms.

To complete the classification of our mosaicked image, you are going to use a random forests classifier contained within the easy-to-use Classification tool in SEPAL. The image values used to train the model include the Landsat mosaic values and some derivatives, if selected (such as NDVI). There are likely additional datasets that can be used to help differentiate land cover classes such as elevation data.

After we create the map, you might find that there are some areas that are not classifying well. The classification process is iterative, and there are ways you can modify the process to get better results. One way is to collect more or better reference data to train the model. You can test different classification algorithms, or explore object-based approaches, opposed to pixel-based approaches. The possibilities are many and should relate back to the nature of the classes you hope to map. Last, but certainly not least, is to improve the quality of your training data. Be sure to log all of these decision points in order to recreate your analysis in the future.

Objective:

Run SEPAL’s classification tool.

Prerequisites:

land cover categories defined in Section 2.1;

mosaic created in Section 2.2; and

training data created in Section 2.3.

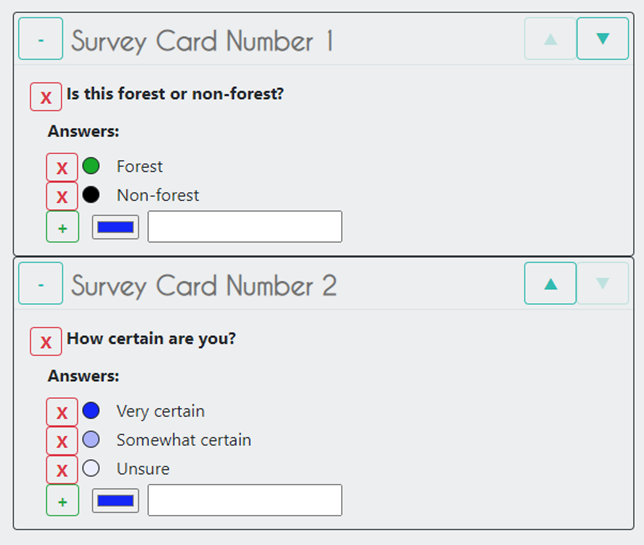

Add training data collected outside of SEPAL#

Note

This section is optional.

If you collected training data using QGIS, CEO, or another pathway, you will need to add the Training Data we collected in Section 2.3 in the TRN tab.

Select the green Add button.

Import your training data - Upload a .csv file. - Select Earth Engine Table and enter the path to your Earth Engine asset in the EE Table ID field.

Select

Next.For Location Type, select X/Y” coordinate columns or GEOJSON Column, depending on your data source. GEE assets will need the GEOJSON column option.

Select

Next.Leave the Row filter expression blank. For Class format, select Single Column or Column per class, as your data dictates.

In the Class Column field, select the column name that is associated with the class.

Select

Next.

Now you will be asked to confirm the link between the legend you entered previously and your classification. You should see a screen as follows. If you need to change anything, select the green plus buttons. Otherwise, select Done, then select Close.

Review additional classification options#

Select AUX to examine the auxiliary data sources available for the classification.

Auxiliary inputs are optional layers which can be added to help aid the classification. There are three additional sources available:

Latitude: includes the latitude of each pixel;

Terrain: includes elevation of each pixel from SRTM data; and

Water: includes information from the JRC Global Surface Water Mapping layers

Select Water and Terrain and then Apply.

Select CLS to examine the classifier being used.

The default is a Random forest with 25 trees.

Other options include classification and regression trees (CART), Naive Bayes, support vector machine (SVM), minimum distance, and decision trees (requires a .csv file).

Additional parameters for each of these can be specified by selecting the More button in the lower left.

For this example, we will use the default, Random forest with 25 trees.

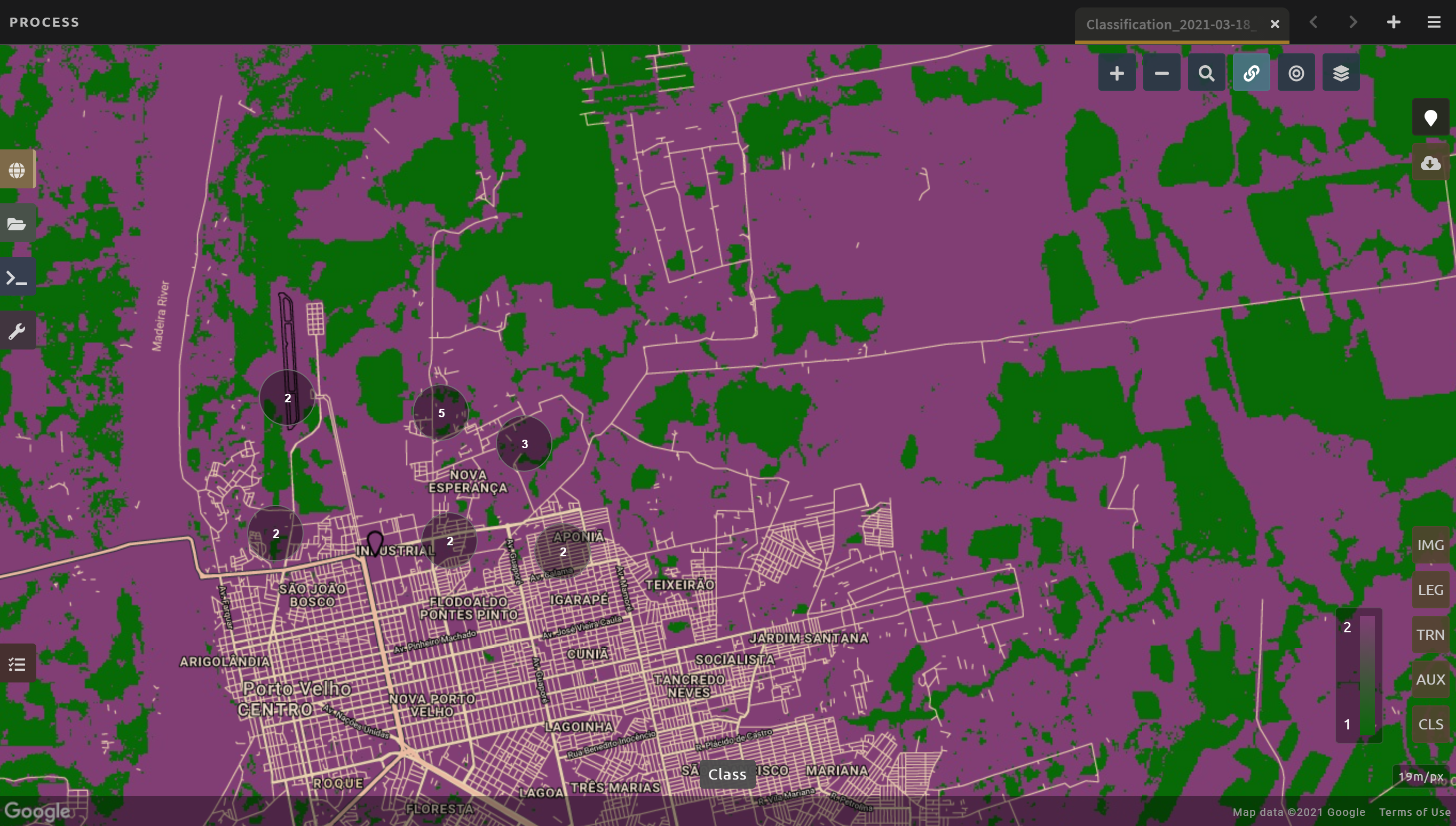

If you turned off your classification preview previously to collect training data, now is the time to turn it back on.

Select the Select layers to show icon.

Select Classification.

Make sure Classification now has a check mark next to it, indicating that the layer is now turned on.

Now we’ll save our classification output.

First, rename your classification by entering a new name in the tab.

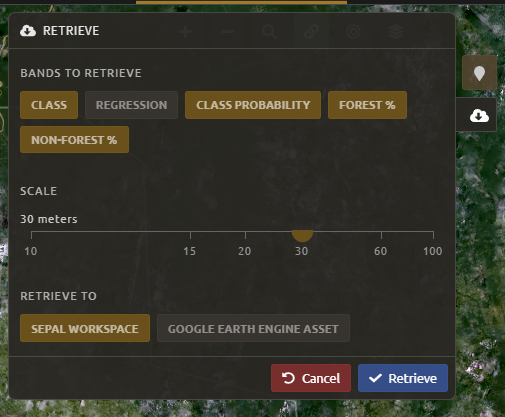

Select

Retrieve classificationin the upper-right hand corner (cloud icon).Choose 30 m resolution.

Select the Class, Class probability, Forest % and Non-forest % bands.

Retrieve to your SEPAL workspace.

Note

You can also choose Google Earth Engine Asset if you would like to be able to share your results or perform additional analysis in GEE; however, with this option, you will need to download your map from GEE using the export function.

Once the download begins, you will see the spinning wheel in the lower-left of the webpage in Tasks. Select the spinning wheel to observe the progress of your export.

When complete, if you chose SEPAL workspace, the file will be in your SEPAL downloads folder. (Browse > downloads > classification name folder). If you chose GEE Asset, the file will be in your GEE Assets.

QA/QC considerations and methods#

Quality assurance and quality control, commonly referred to as QA/QC, is a critical part of any analysis. There are two approaches to QA/QC: formal and informal. Formal QA/QC, specifically sample-based estimates of error and area, are described in Module 4. Informal QA/QC involves qualitative approaches to identifying problems with your analysis and classifications to iterate and create improved classifications.

Here we’ll discuss one approach to informal QA/QC.

Following analysis, you should spend some time looking at your change detection in order to understand if the results make sense. We’ll do this in the classification window. This allows us to visualize the data and collect additional training points if we find areas of poor classification. Other approaches not covered here include visualizing the data in GEE or another program, such as QGIS or ArcMAP.

With SEPAL, you can examine your classification and collect additional training data to improve the classification.

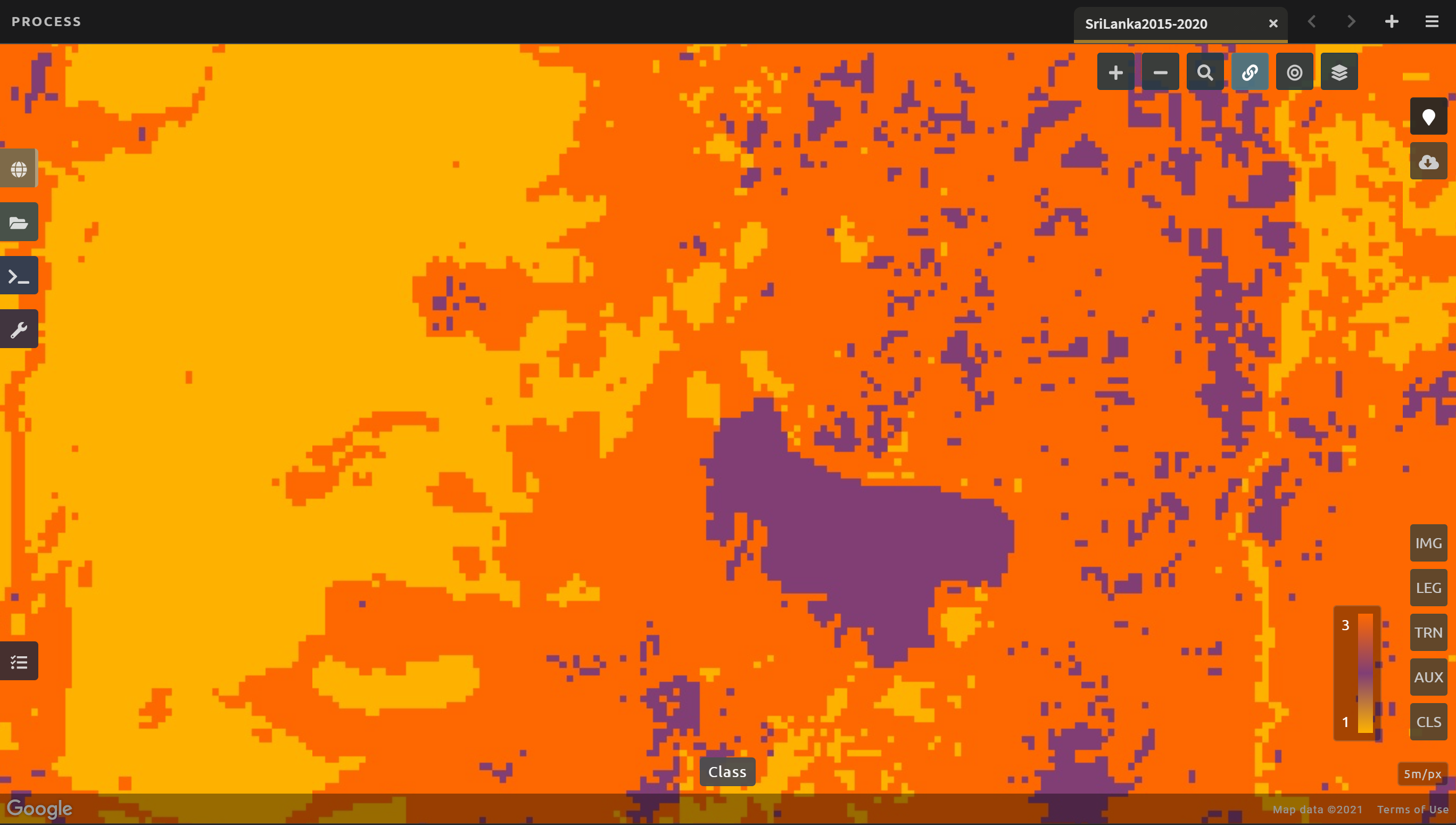

Turn on the imagery for your classification; pan and zoom around the map. Compare your classification map to the 2015 and 2020 imagery. Where do you see areas that are correct? Where do you see areas that are incorrect? If your results make sense, and you are happy with them, continue to formal QA/QC in Module 4.

Note

If you are not satisfied, collect additional points of training data where you see inaccuracies. Then, re-export the classification following the steps in Section 2.3.

Image change detection#

Image change detection allows us to understand differences in the landscape as they appear in satellite images over time. There are many questions that change detection methods can help answer, including: “When did deforestation take place?” and “How much forest area has been converted to agriculture in the past five years?”

Most methods for change detection use algorithms supported by statistical methods to extract and compare information in the satellite images. To conduct change detection, we need multiple mosaics or images, each one representing a point in time.

In this section of SEPAL documentation, we will describe how to detect change between two dates using a simple model (note: this theory can be expanded to include more dates as well). In addition, we’ll describe time series analysis, which generally looks at longer periods of time.

The objective of this module is to become associated with methods of detecting change for an AOI using the SEPAL platform. We will build upon and incorporate what we have covered in the previous modules, including: creating mosaics, creating training samples, and classifying imagery.

This module is split into two exercises: the first addresses change detection using two dates; the second demonstrates more advanced methods using time series analysis with the BFAST algorithm and LandTrendr.

At the end of this module, you will know how to conduct a two-date change detection in SEPAL, have a basic understanding of the BFAST tool in SEPAL, and be familiar with TimeSync and LandTrendr.

This module should take you approximately three hours to complete.

Two-date change detection#

In this exercise, you will learn how to conduct a two-date change detection in SEPAL with the same classification algorithm used in Module 2.

This approach can be used with more than two dates in the future, if needed.

In this example, you will create optical mosaics and classify them, building on skills learned in Module 1 and Module 2.

You may use two classifications from your own research area, if you prefer.

Note

Prerequisites:

Create mosaics for change detection#

Before we can identify change, we first need to have images to compare.

In this section, we will create two mosaics of Sri Lanka, generate training data, and then classify the mosaics. This is discussed in detail in Module 1 and Module 2.

Open the Process menu and select Optical mosaic. Alternatively, select the green plus symbol to open the Create recipe menu; then, select Optical mosaic.

Use the following data:

Choose Sri Lanka for the AOI.

Select 2015 for the date (DAT).

Select Landsat 8 (L8) as the source (SRC).

In the Composite (CMP) menu, ensure that surface reflectance ((SR) correction) is selected, as well as Median as the compositing method.

Select Retrieve mosaic; then select Blue, Green, Red, NIR, SWIR1, SWIR2. Lastly, select Google Earth Engine Asset and Retrieve.

Note

If you don’t see the Google Earth Engine Asset option, you need to connect your Google account to SEPAL by selecting your username in the lower right.

Repeat previous steps, but change the Date parameter to 2020.

Note

It may take a significant amount of time before your mosaics finish exporting.

Start the classification#

Now we will begin the classification, as we did in Module 2. There are multiple pathways for collecting training data. To create a layer of points, using desktop GIS, including QGIS and ArcGIS, is one common approach. Using GEE is another approach. You can also use CEO to create a project of random points to identify (see detailed directions in Section 4.1.2). All of these pathways will create a .csv file or a GEE table that you can import into SEPAL to use as your training data set.

SEPAL has a built-in reference data collection tool in the classifier. This is the tool you used in Module 2, and we will again use this tool to collect training data. Even if you use a .csv file or GEE table in the future, this is a helpful feature to collect additional training data points to help refine your model.

In the Process menu, select the green plus symbol and select Classification.

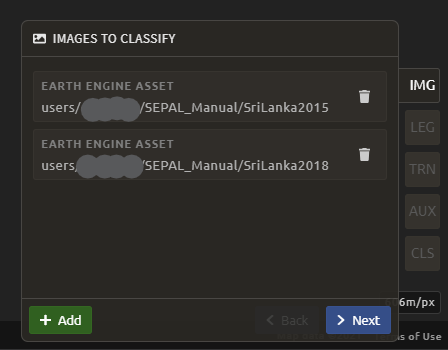

Add the two Sri Lanka optical mosaics for classification by selecting + Add and choose either Saved SEPAL Recipe or Earth Engine Asset (recommended).

If you choose Saved SEPAL Recipe, simply select your Module 2 Amazon recipe.

If you choose Earth Engine Asset, enter the Earth Engine Asset ID for the mosaic. The ID should look like “users/username/SriLanka2015”.

Tip

Remember that you can find the link to your Earth Engine Asset ID via GEE’s Asset tab (see Exercise 2.2 Part 2).

Select bands: Blue, Green, Red, NIR, SWIR1, and SWIR2. You can add other bands as well, if you included them in your mosaic. You can also include Derived bands by selecting the green button in the lower left and selecting Apply.

Repeat the previous steps for your 2020 optical mosaic.

Attention

Selecting Saved SEPAL recipe may cause the following error at the final stage of your classification:

Google Earth Engine error: Failed to create preview.

This occurs because GEE gets overloaded. If you encounter this error, please retrieve your classification as described in section 2.2.

Collect change classification training data#

Now that we have the mosaics created, we will collect change training data. While more complex systems can be used, we will consider two land cover classes that each pixel can be in 2015 or 2020: forest and non-forest. Thinking about change detection, we will use three options: stable forest, stable non-forest, and change. That is, between 2015 and 2020, there are four pathways: a pixel can be forest in 2015 and in 2020 (stable forest); a pixel can be non-forest in 2015 and in 2020 (stable non-forest); or it can change from forest to non-forest or from non-forest to forest. If you use this manual to guide your own change classification, remember to log your decisions including how you are thinking about change detection (what classes can change and how), and the imagery and other settings used for your classification.

![digraph G {

rankdir=LR;

subgraph cluster0 {

node [style=filled, shape=box];

a0 [label="Non-forest", color=lightgrey];

a1 [label="Forest", color=darkgreen];

label = "2015";

}

subgraph cluster1 {

node [style=filled, shape=box];

b0 [label="Non-forest", color=lightgrey];

b1 [label="Forest", color=darkgreen];

label = "2018";

}

a0 -> b0 [color=grey];

a1 -> b1 [color=darkgreen];

a1 -> b0 [color=orange];

a0 -> b1 [color=orange];

}](../_images/graphviz-6cfd6921b4e1bc1e1af4c96888889efdf86827dc.png)

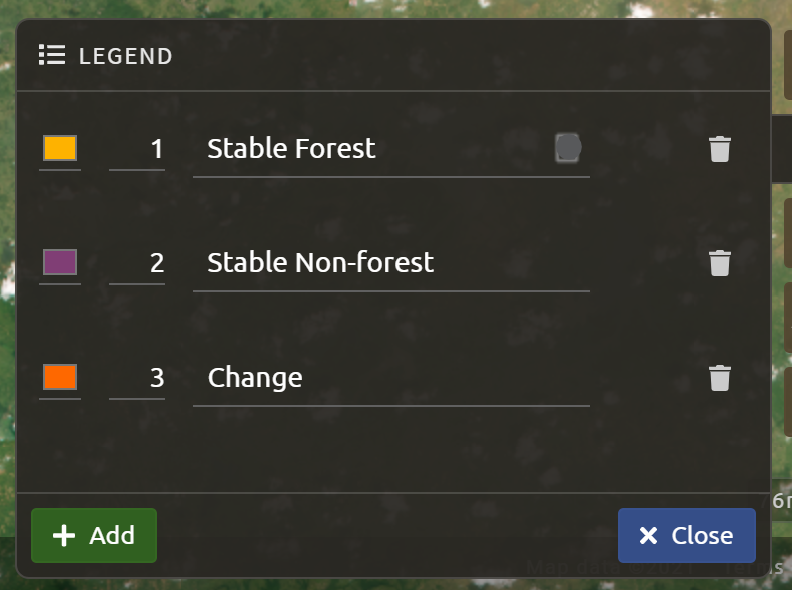

In the Legend menu, select + Add. This will add a place for you to write your first class label. You will need three legend entries:

The first should have the number 1 and a class label of Forest.

The second should have the number 2 and a class label of Non-forest.

The third should have the number 3 and a class label of Change.

Choose colors for each class as you see fit and select Close.

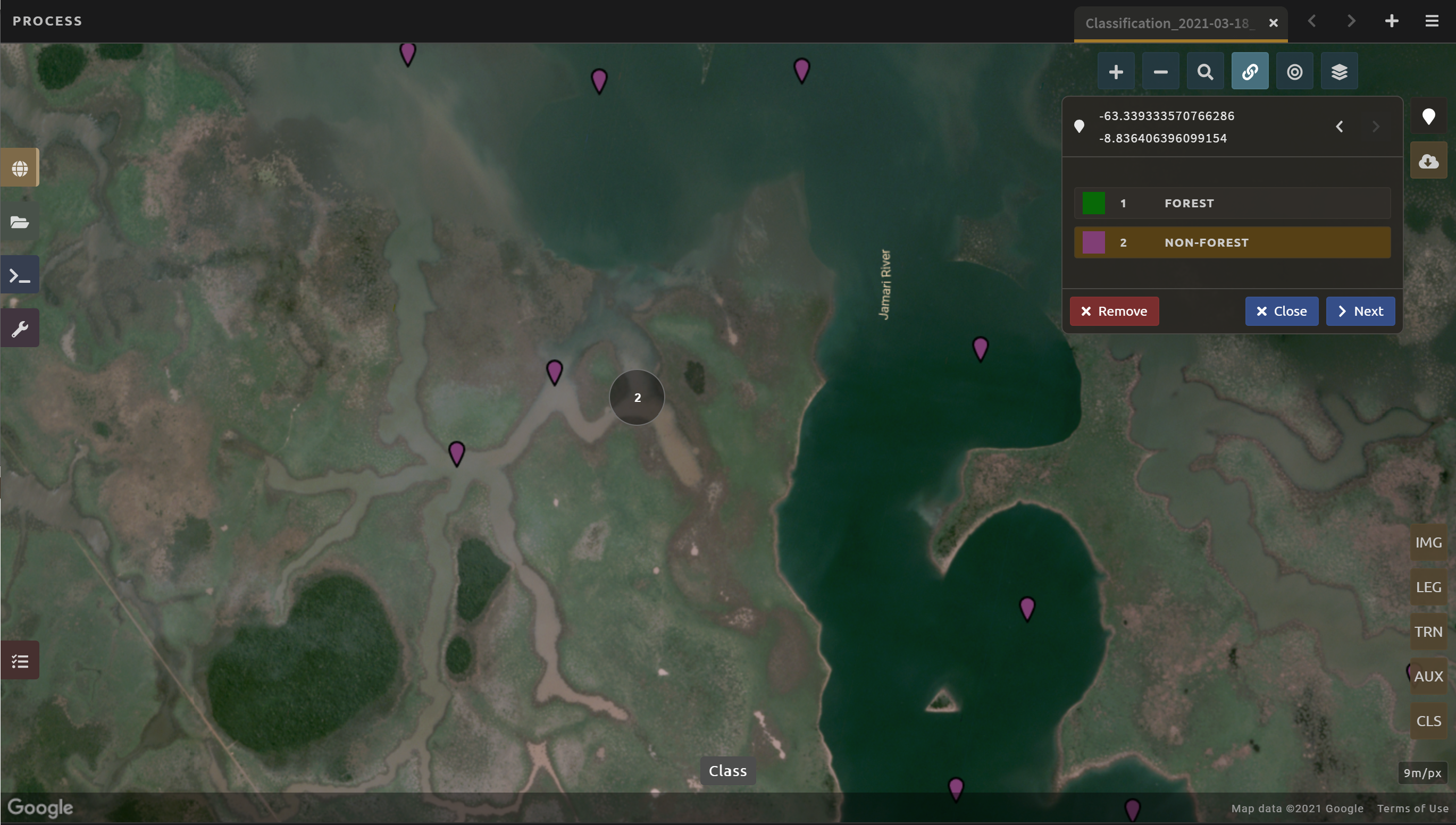

Now, we’ll create training data. First, let’s pull up the correct imagery. Choose Select layers to view. As a reminder, available base layers include: - SEPAL (Minimal dark SEPAL default layer) - Google Satellite - Planet NICFI composites

We will use the Planet NICFI composites for this example. The composites are available in either RGB or false color infrared (CIR). Composites are available monthly after September 2020 and for every six months prior through 2015. Select Dec 2015 (six months). Both RGB and CIR will be useful, so choose whichever you prefer. You can also select Show labels to enable labels that can help you orient yourself in the landscape. You will need to switch between this Dec 2015 data and the Dec 2020 data to find stable areas and changed areas.

Note

If you have collected data in QGIS, CEO or another program, you can skip the following steps. Simply select TRN in the lower right. Select + Add, then upload your data to SEPAL. Finally, select the CLS button in the lower right and you can skip to Section 3.1.4

Now select the point icon. When you hover over this icon, it says Enable reference data collection.

With reference data collection enabled, you can start adding points to your map.

Use the scroll wheel on your mouse to zoom in on the study area. You can drag to pan around the map. Be careful though, as a single click will place a point on the map.

Tip

If you accidentally add a point, you can delete it by selecting the red Remove button.

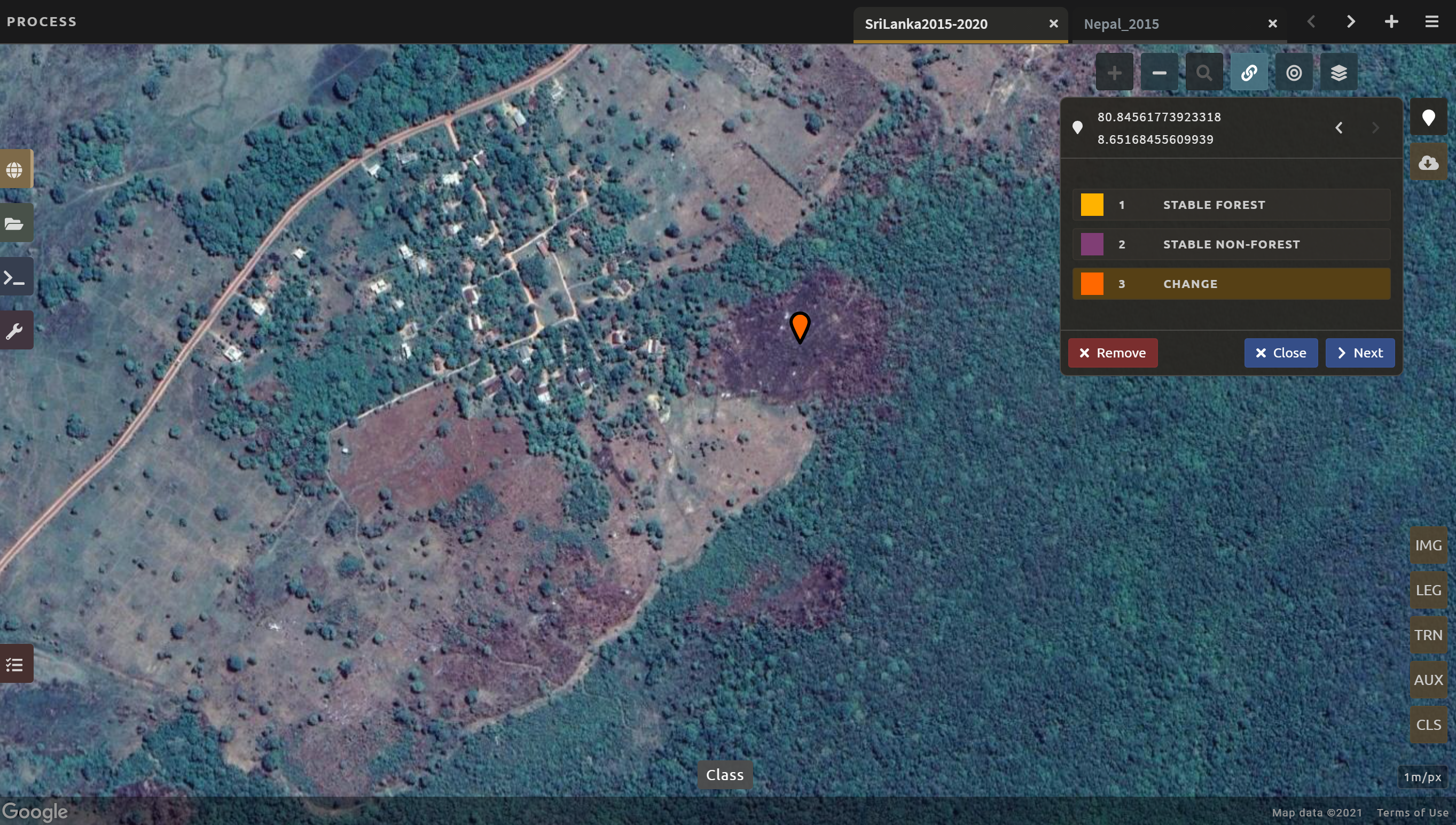

Collect training data for the Stable forest class. Place points where there is forest in both 2015 and 2020 imagery. Then collect training data for the Stable Non-forest class. Place points where there is not forest in either 2015 or 2020. You should include water, built-up areas, bare dirt, and agricultural areas in your points. Finally collect training data for the Change class.

Tip

If you are having a hard time finding areas of change, several tools can help you:

You can use Google satellite imagery to help. Areas of forest loss often appear as black or dark purple patches on the landscape. Be sure to always check the 2015 and 2020 Planet imagery to verify Change.

The CIR (false color infrared) imagery from Planet can also be helpful in identifying areas of change.

- You can also use SEPAL’s on-the-fly classification to help after collecting a few Change points.

If the classification does not appear after collecting the Stable forest and Stable non-forest classes, select the “Select layers to view” icon.

Toggle the Classification option off, and then on again.

You may need to select CLS on the lower right of the screen, then select Close to get the classification map to appear.

With the classification map created, you can find change pixels and confirm whether they are change or not by comparing 2015 and 2020 imagery.

One trick for determining change is to place a Change point in an area of suspected change. Then you can compare 2015 and 2020 imagery without losing the place you were looking at. If it is not change, you can switch which classification you have identified the point as.

Continue collecting points until you have approximately 25 points for Forest and Non-forest classes and about 5 points for the Change class. More is better. Try to have your points spread out across Sri Lanka.

If you need to modify the classification of any of your data points, you can select the point to return to the classification options. You can also remove the point in this way.

When you are happy with your data points, select the AUX button in the lower right. Select Terrain and Water. This will add auxiliary data to the classification.

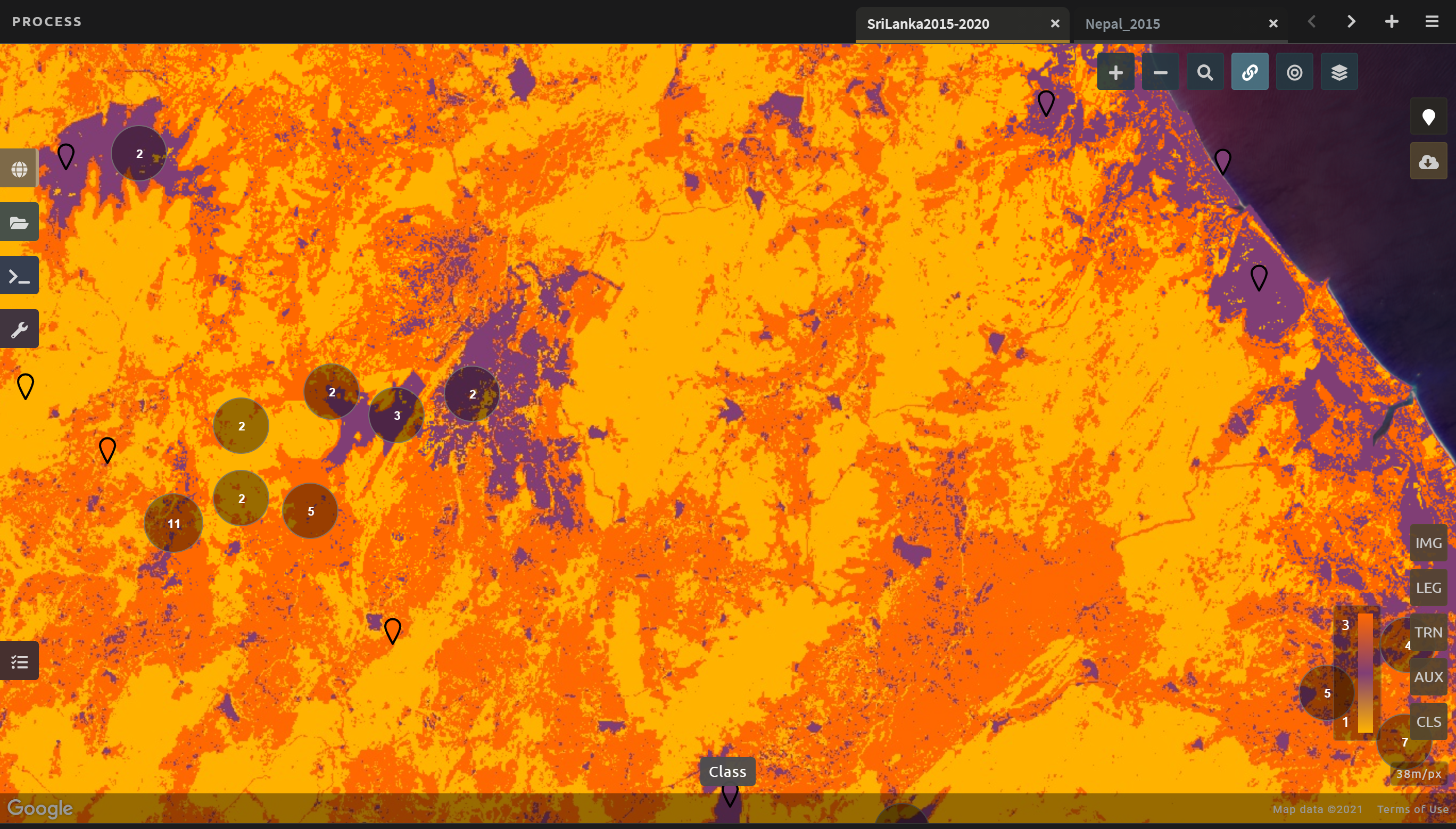

Finally select the CLS button in the lower right. You can change your classification type to see how the output changes. If it has not already, SEPAL will now load a preview of your classification.

Two-date classification retrieval#

Now that the hard work of setting up the mosaics and creating and adding the training data is complete, all that is left to do is retrieve the classification.

To retrieve your classification, select the cloud icon in the upper right to open the Retrieve pane.

Select Google Earth Engine Asset if you would like to share your map or if you would like to use it for further analysis.

Select SEPAL workspace if you would like to use the map internally only.

Then, use the following parameters:

Resolution: 30 m resolution

Selected bands: the Class, Class probability, Forest % and Non-forest % bands.

Finally, select Retrieve.

Quality assurance and quality control#

Quality assurance and quality control (QA/QC) is a critical part of any analysis. There are two approaches to QA/QC: formal and informal. Formal QA/QC, specifically sample-based estimates of error and area are described in Module 4. Informal QA/QC involves qualitative approaches to identifying problems with your analysis and classifications to iterate and create improved classifications. Here we’ll discuss one approach to informal QA/QC.

Following analysis, you should spend some time looking at your change detection in order to understand if the results make sense. This allows us to visualize the data and collect additional training points if we find areas of poor classification. Other approaches not covered here include visualizing the data in GEE or in another program, such as QGIS or ArcMAP.

With SEPAL, you can examine your classification and collect additional training data to improve the classification.

Turn on the imagery for your classification; pan and zoom around the map.

Compare your classification map to the 2015 and 2020 imagery. Where do you see areas that are correct? Where do you see areas that are incorrect?

If your results make sense and you are happy with them, continue to formal QA/QC in Module 4.

Note

If you are not satisfied, collect additional points of training data where you see inaccuracies. Then re-export the classification following the steps in Section 3.1.3.

Deforest tool#

The DEnse FOREst Time Series (deforest) tool is a method for detecting changes in forest cover in a time series of Earth observation data. As input, it takes a time series of forest probability measurements, producing a map of deforestation and an “early warning” map of unconfirmed changes. The method is based on the “Baysian time series” approach of Reiche et al. (2018).

The tool was designed as part of the Satellite Monitoring for Forest Management (SMFM) project, which aimed to address global challenges relating to the monitoring of tropical dry forest ecosystems. It was conducted in partnership with teams in Mozambique, Namibia and Zambia (for more information, see https://www.smfm-project.com).

Full documentation is hosted at http://deforest.rtfd.io

This module should take you approximately one to two hours to complete.

Data preparation#

For this exercise, we will be using the sample data that is included with the tool. Additionally, instructions are given on how to create a time series of forest probability using tools with the SEPAL platform.

Objectives |

Prerequisites |

|---|---|

Learn how to use the SMFM Deforest tool |

SEPAL account |

Completed SEPAL modules on mosaics, classification and time series |

Jupyter notebook basics (optional)#

If you are unfamiliar with Jupyter notebooks, this section is meant to get you acquainted enough with the system to successfully run the SMFM Deforest tool. A notebook is significantly different than most SEPAL applications, but they are a powerful tool used in data science and other disciplines.

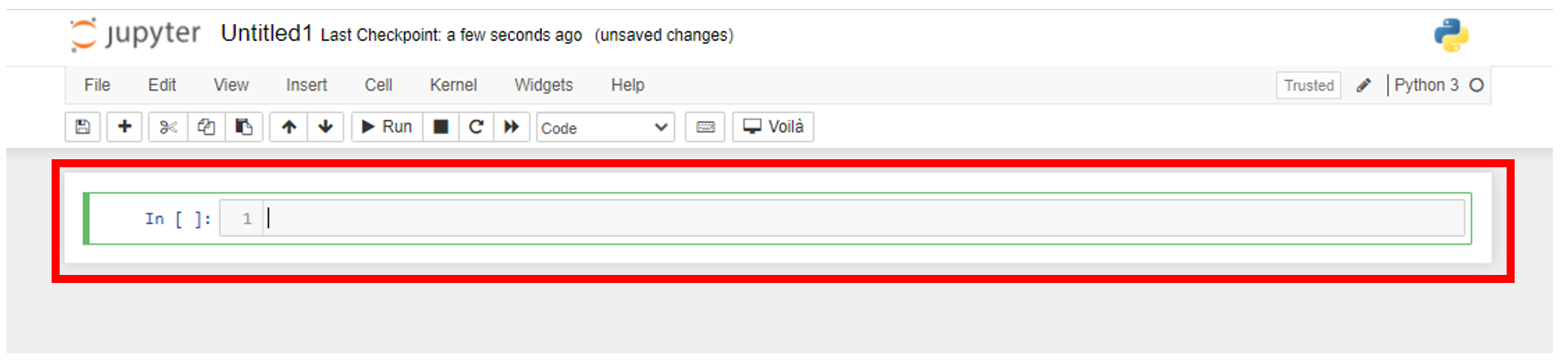

Cells

Every notebook is broken into cells. Cells can come in a few formats, but typically they will be either markdown or code. Markdown cells are the descriptive text and images that accompany the code to help a user understand the context and what the code is doing. Conversely, code cells run code or a system operation. There are many different languages which can be used in a Jupyter notebook. For this tool, we will be using Python.

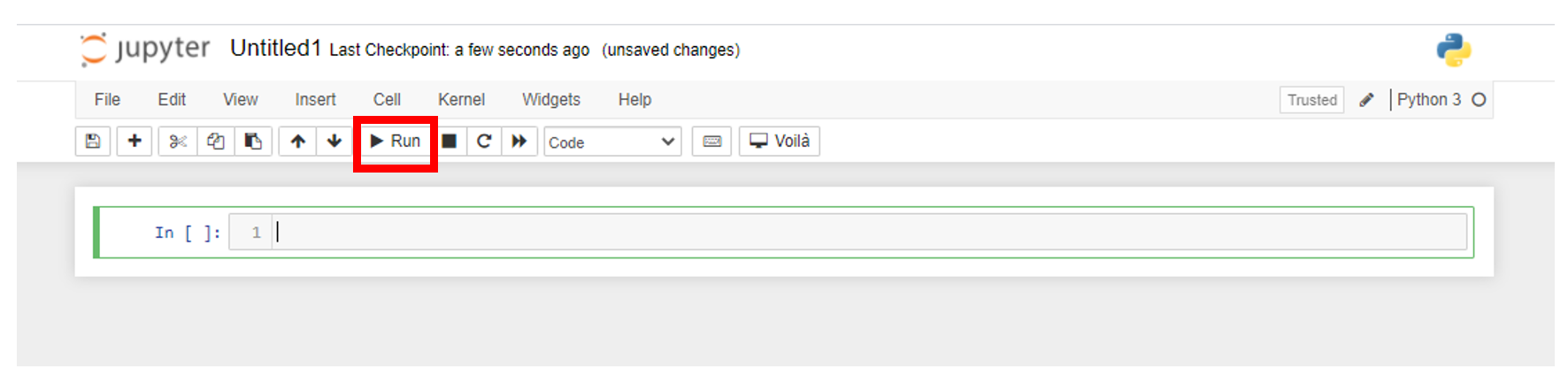

Running cells

To run a cell, select the cell, then locate and select the Run button in the upper menu. You can run a cell more quickly using the keyboard shortcut shift-enter.

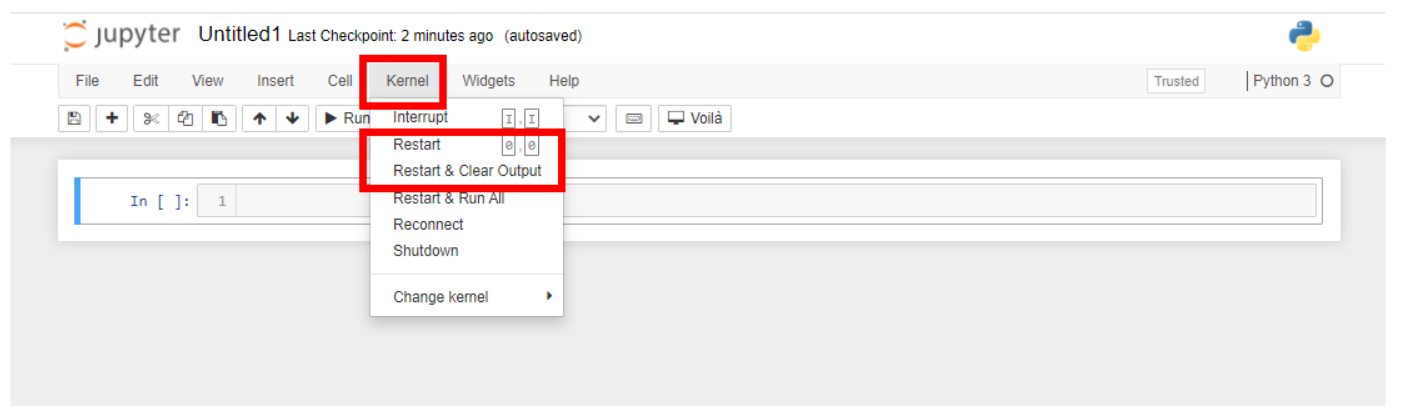

Kernel

The kernel is the computation engine that executes the code in the Jupyter notebook. In this case, it is a Python 3 kernel. For this tutorial, you do not need to know much about this, but if the notebook freezes or you need to reset it for any reason, you can find kernel operations in the toolbar menu.

Restarting the kernel:

Go to the toolbar at the top of the notebook and select Kernel.

From the dropdown menu, select Restart Kernel and clear outputs.

Preparing your data#

For this exercise, we will be using the sample data that is included with the tool. Additionally, instructions are given on how to create a time series of forest probability using tools with the SEPAL platform.

Attention

SMFM Deforest is still in the process of being adapted for use on SEPAL. The forest probability time series will be derived from existing methods to produce a satellite time series implemented on SEPAL.

This tutorial will use the demo data that is packaged with the SMFM Deforest tool, but steps are presented on how to use the current SEPAL implementation with the tool. Note that the data preparation steps in SEPAL can take many hours to complete. If you are unfamiliar with any of the preparations steps, please consult the relevant modules.

If you already have a time series of percent forest coverage, feel free to use that.

Download demo data.

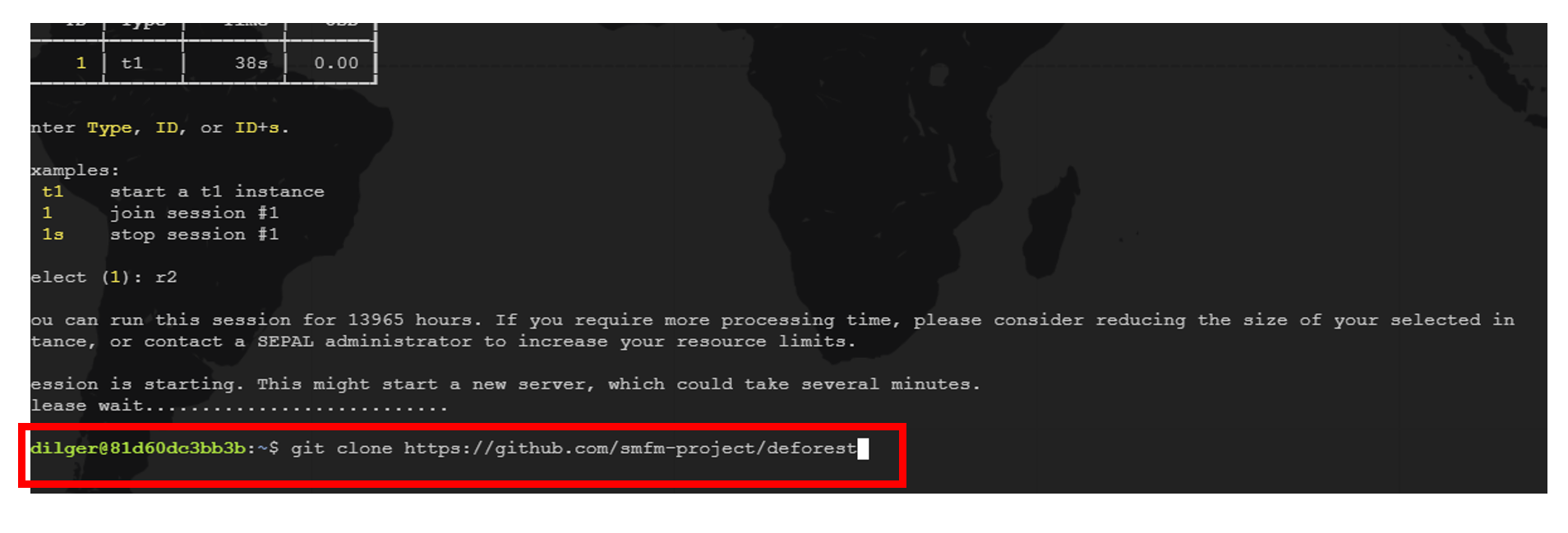

Go to the SEPAL Terminal.

Start a new instance or join your current instance.

Clone the deforest GitHub repository to your SEPAL account using the following command.

` git clone https://github.com/smfm-project/deforest `Use the SEPAL workflow to generate time series of forest probability images.

Create an optical mosaic for your AOI using the Process tab and selecting Optical mosaic. If this is unfamiliar to you, please see the tutorials on OpenMRV under the process, “Mosaic generation with SEPAL”.

Save the mosaic as a recipe.

Open a new classification and point to the Optical mosaic recipe as the image to classify. Use the Process tab and select the Classification process. If this is unfamiliar to you, please see the tutorials on OpenMRV under the process, “Classification”.

Select the bands you want to include in the classification.

Add forest/non-forest training data.

Sample points directly in SEPAL.

Optionally, use Earth Engine asset.

Apply the classifier.

Select the %forest output.

Save the classification as a recipe.

Open a new time series.

Select the same AOI as your mosaic.

Choose a date range for the time series.

In the ‘SRC’ box, select satellites you used in the previous steps and the classification to apply.

Download the time series to your SEPAL workspace.

Note

It will take many hours to download the classified time series to your account, depending on how large your AOI is.

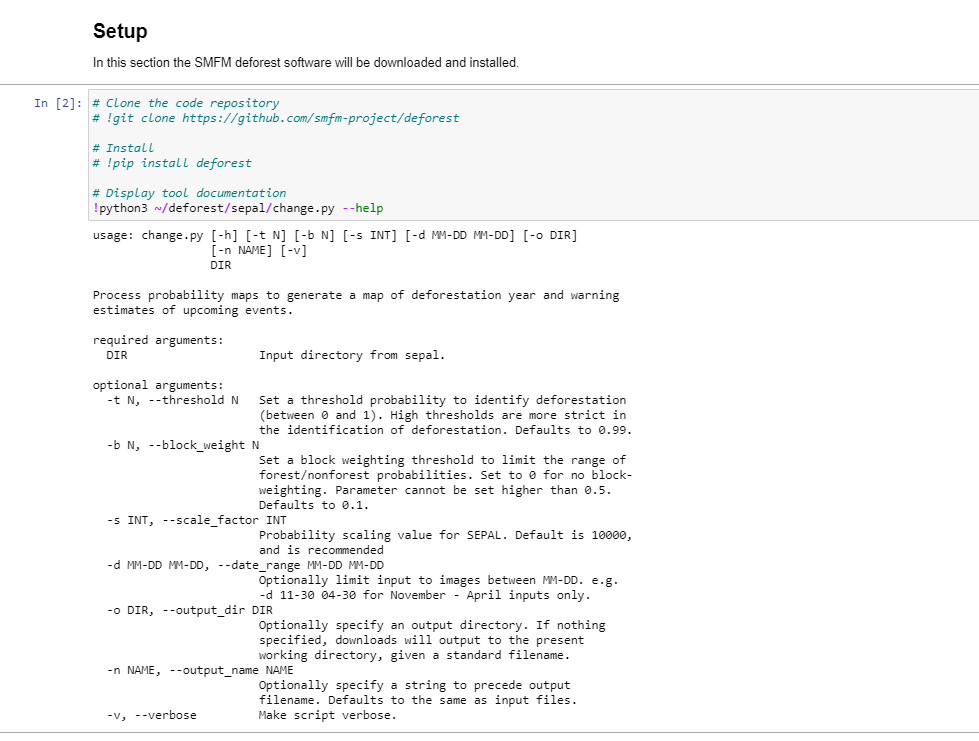

Setup#

Go to the Apps menu by selecting the wrench icon and typing “SMFM” into the search field. Select “SMFM Deforest”.

Note

Sometimes the tool takes a few minutes to load. Wait until you see the tool’s interface. In case the tool fails to load properly, close the tab and repeat the steps above. If this does not work, reload SEPAL.

Click and run the first cell under the Setup header. This cell runs two commands: the first installs the deforest Python module and the second runs the –help switch to display some documentation on running the tool.

If the help text is output beneath the cell, move onto Step 3. If there is an error, continue to Step 2. The error message might say:

` python3: can't open file '/home/username/deforest/sepal/change.py': [Errno 2] No such file or directory `

Successful setup.#

Install the package via the SEPAL Terminal.

Go to your SEPAL Terminal.

Enter 1 to access the terminal of Session 1. You can think of a session as an instance of a virtual machine that is connected to your SEPAL account.

Clone the Deforest GitHub repository to your SEPAL account.

git clone https://github.com/smfm-project/deforestReturn to the SMFM notebook and repeat Step 1.

Once you have successfully set up the tool, take a moment to read through the help document of the Deforest tool that is output below the Jupyter notebook cell you just ran. In the next part, we will explain in more detail some of the parameters.

Process the time series#

Processing the time series imagery can be done with a single line of code using the Deforest change.py command line interface.

To use the demo imagery, you do not need to change any of the inputs. However, if you are using a custom time series you will need to make some modifications. To change the command to point to a custom time series of percent forest images you will need to update the path to your time series.

Original:

!python3 ~/deforest/sepal/change.py ~/deforest/sepal/example_data/Time_series_2021-03-24_10-53-03/0/ -o ~/ -n sampleOutput -d 12-01 04-30 -t 0.999 -s 6000 -v

Example path to time series updated:

!python3 ~/deforest/sepal/change.py ~/downloads/PATH_TO_TIME_SERIES/0/ -o ~/ -n sampleOutputT -d 12-01 01-08 -t 0.999 -s 6000 -v

Note

By default, the time series should be downloaded to a Downloads folder in your home directory and should have another folder in it named 0.

Parameters

Name |

Switch |

Description |

|---|---|---|

Output location |

-o |

output location where images will be saved to SEPAL account |

Output name |

-n |

Output file name prefix |

Date range |

-d |

A date-range filter. Dates need to be formatted as ‘-d MM-DD MM-DD’ |

Threshold |

-t |

Set a threshold probability to identify deforestation (between 0 and 1). High thresholds are more strict in the identification of deforestation. Defaults to 0.99. |

Scale |

-s |

Scale inputs by a factor of 6000. In a full-scale run, this should be set to 10000; here it’s used to correct an inadequate classification. |

Verbose |

-v |

Prints information to the console as the tool is run. |

If you would like to use a time frame other than the example, update the date range switch.

Run the Process the time series cell.

By default, the tool is set to use verbose (-v) output. With this option, as each image is processed, a message will be printed to inform us of the progress.

- This cell runs two commands:

The first line is running the SMFM Deforest change detection algorithm (change.py).

After processing the images, we print them out to ensure the program runs successfully.

Note

The exclamation mark (!) is used to run commands using the underlying operating system. When we run !ls in the notebook, it is the same as running ls in the terminal.

The output deforestation image will be saved to the home directory of SEPAL account (home/username) by default. If you want to save your images in a different location it can be changed by adding the new path after the -o switch.

Download outputs to local computer (optional).

Navigate to the Files section of your SEPAL account.

Locate the output image to download and click to select it. In this case, the image is named sampleOutput_confirmed.

Select the download icon.

Data visualization#

Now that we have run the deforestation processing chain, we can visualize our output maps. The outputs of the SMFM tool are two images: Confirmed and Warning. We will look at the confirmed image first.

Run the first Data visualization cell of the Jupyter notebook.

If you changed the name of your output file, be sure to update the path on Line 8 for the variable confirmed.

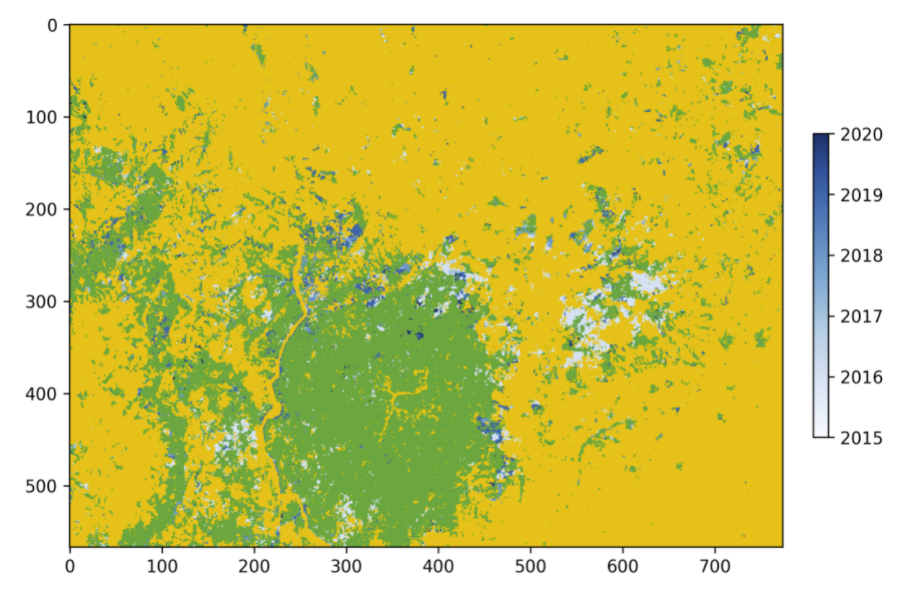

The confirmed image shows the years of change that have been detected in the time series. Stable forest is colored green, non-forest is colored yellow, and the change years colored by a blue gradient.

It is recommended that the user discards the first two to three years of change, or uses a very high-quality forest baseline map to mask out locations that weren’t forest at the start of the time series. This is needed since our input imagery is a forest probability time series which initially considers the landscape as forest.

Next, we will check out the deforest warning output.

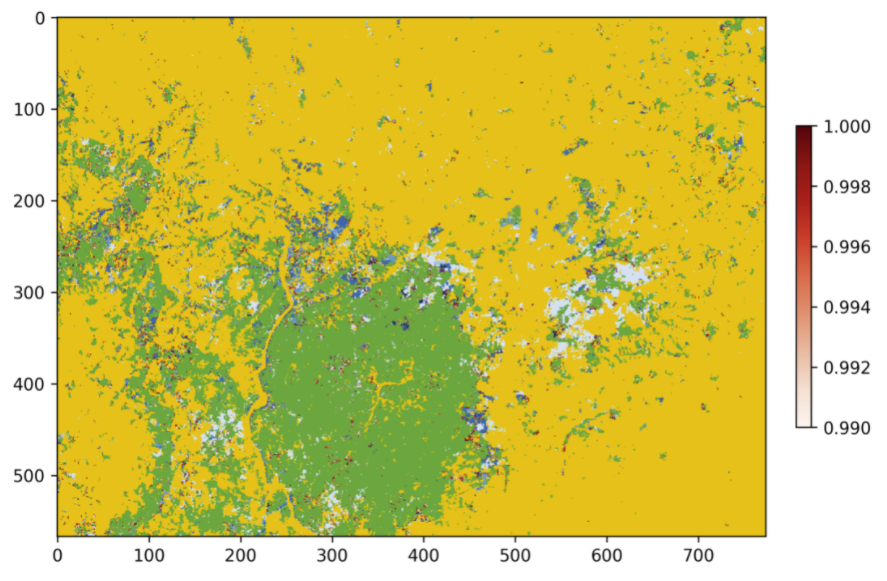

Run the second Data visualization cell.

This image shows the combined probability of non-forest existing at the end of our time series in locations that have not yet been flagged as deforested. This can be used to provide information on locations that have not yet reached the threshold for confirmed changes, but are looking likely to be possible.

You can view a demonstration of the above steps in this video.

Additional resources#

Source code: The source code of the Deforest tool and Jupyter notebook can be found in the GitHub repository.

Bug report: in case you notice a bug or have issues using the tool, you can report an issue using the Issues section of the Github repository.

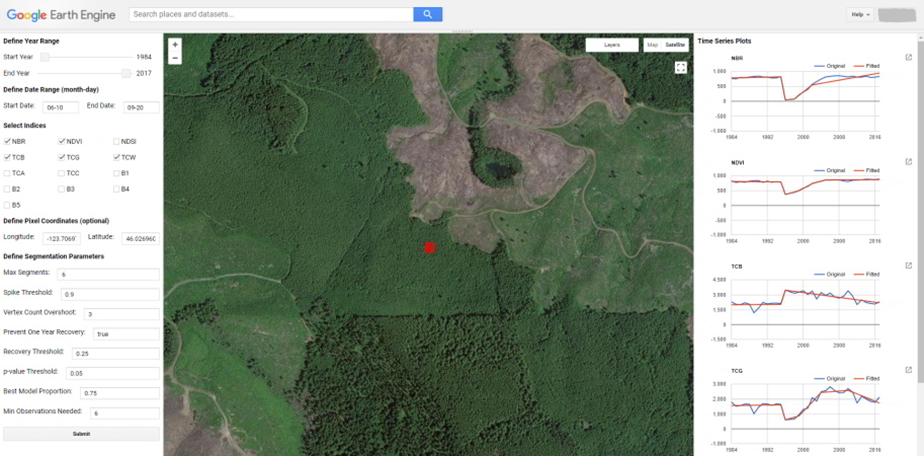

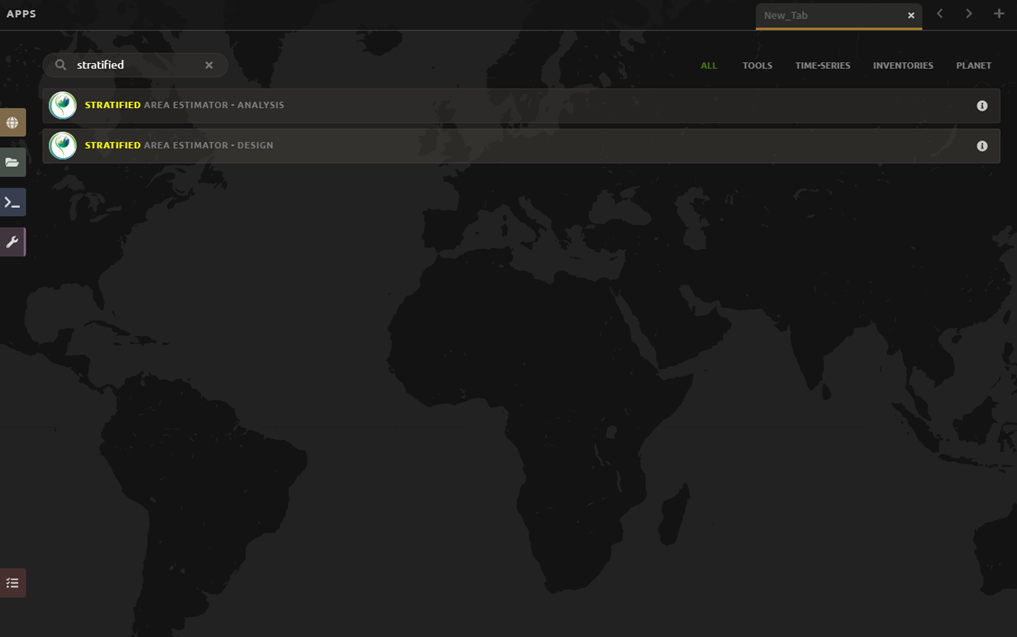

Other approaches to time series analysis#

In this exercise, you will learn more about time series analysis. SEPAL has the BFAST option, described first. We also provide information on TimeSync and LandTrendr, products currently only available outside of SEPAL and CEO.

TimeSync integration is coming to CEO in 2021.

Objectives:

learn the basics of BFAST explorer in SEPAL; and

learn about time series analysis options outside of SEPAL.

Prerequisite:

SEPAL account

BFAST Explorer#

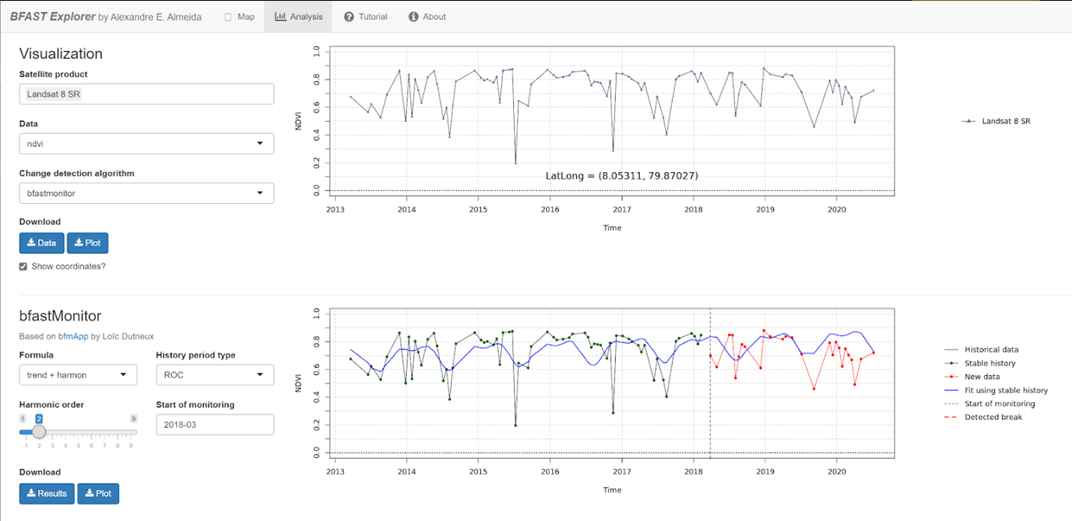

Breaks For Additive Seasonal and Trend (BFAST) is a change detection algorithm for time series which detects and characterizes changes. BFAST integrates the decomposition of time series into trend, seasonal, and remainder components with methods for detecting change within time series. BFAST iteratively estimates the time and number of changes, and characterizes change by its magnitude and direction (Verbesselt et al., 2009).

BFAST Explorer is a Shiny app, developed using R and Python, designed for the analysis of Landsat surface reflectance (SR) time series pixel data. Three change detection algorithms - bfastmonitor, bfast01 and bfast - are used in order to investigate temporal changes in trend and seasonal components via breakpoint detection.

More information can be found online at http://bfast.r-forge.r-project.org. If you encounter any bugs, please send a message to or create an issue on the GitHub page.

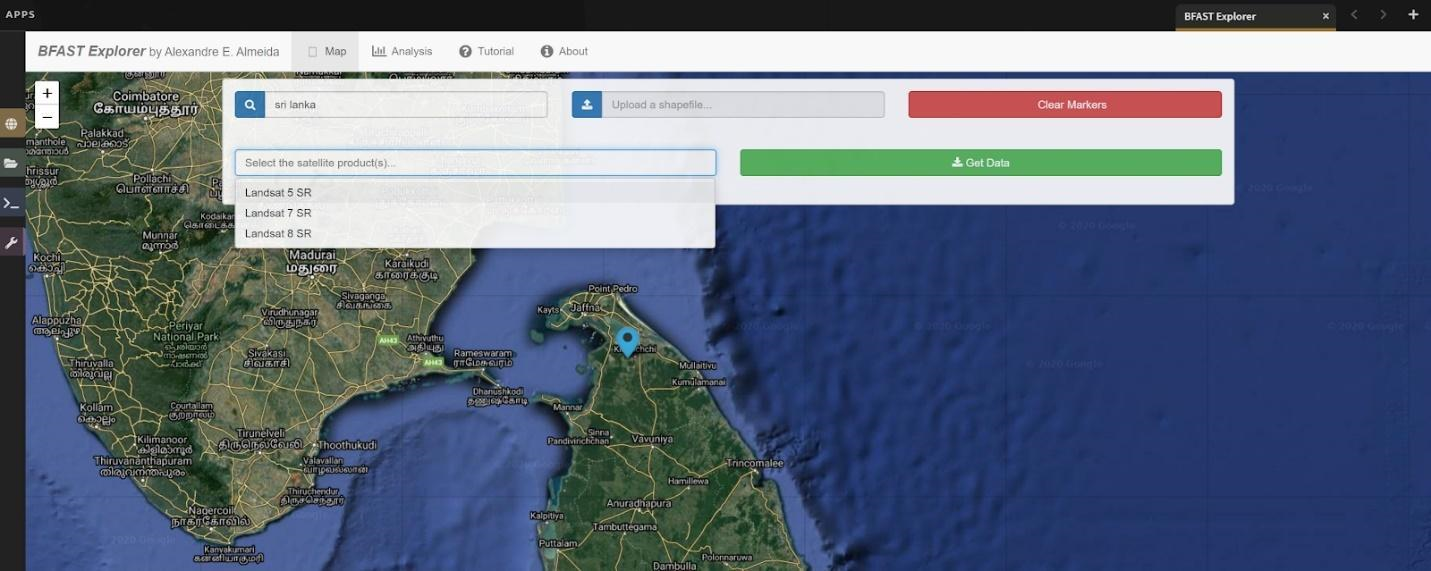

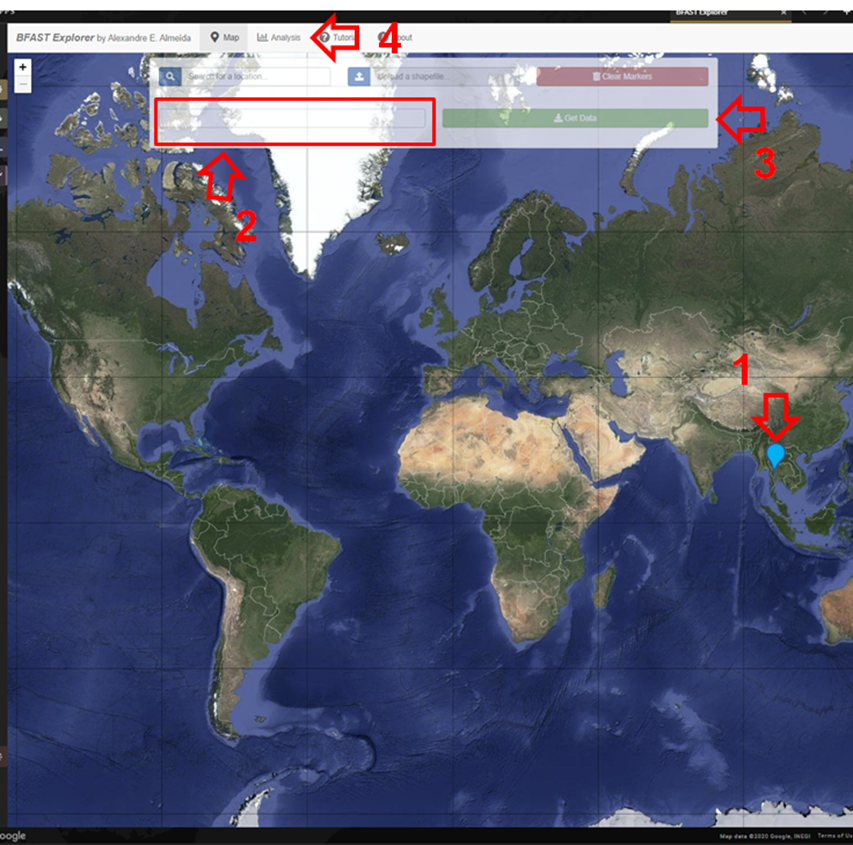

Go to the Apps menu by selecting the wrench icon. Then, enter “BFAST” into the search field and select BFAST Explorer.

Find a location on the map that you would like to run BFAST on. Select a location to drop a marker, and then click the marker to select it. Select Landsat 8 SR from the Select satellite products dropdown menu. Select Get Data (note: it may take a moment to download all of the data for the point).

Select the Analysis button (at the top next to the Map button).

Satellite product: Add your satellite data by selecting them from the Satellite products dropdown menu.

Data: The data to apply the BFAST algorithm to and plot. There are options for each band available as well as indices, such as NDVI, EVI, and NDMI. Select ndvi.

Change detection algorithm: Holds three options of BFAST to calculate for the data series.

Bfastmonitor: Monitoring the first break at the end of the time series.

Bfast01: Checking for one major break in the time series.

Bfast: Time series decomposition and multiple breakpoint detection in trend and seasonal components.

Each BFAST algorithm methodology has characteristics which affect when and why you may choose one over the other. For instance, if the goal of an analysis is to monitor when the last time change occurred in a forest, then Bfastmonitor would be an appropriate choice. Bfast01 may be a good selection when trying to identify if a large disturbance event has occurred, and the full Bfast algorithm may be a good choice if there are multiple times in the time series when change has occurred.

Select bfastmonitor as the algorithm.

You can explore different bands, such as spectral bands like b1, along with the different algorithms.

You can download all the time series data by selecting the blue Data button. All the data will be downloaded as a .csv file, ordered by the acquisition date.

You can also download the time series plot as an image by selecting the blue Plot button. A window will appear offering some raster (.jpeg, .png) and a vectorial (.svg) image output formats.

Note

The black and white flashing is normal.

TimeSync and LandTrendr#

Here we will briefly review TimeSync and LandTrendr, two options available outside of SEPAL that may be useful to you in the future. It is outside of the scope of this manual to cover them in detail, but if you’re interested in learning more we’ve provided links to additional resources.

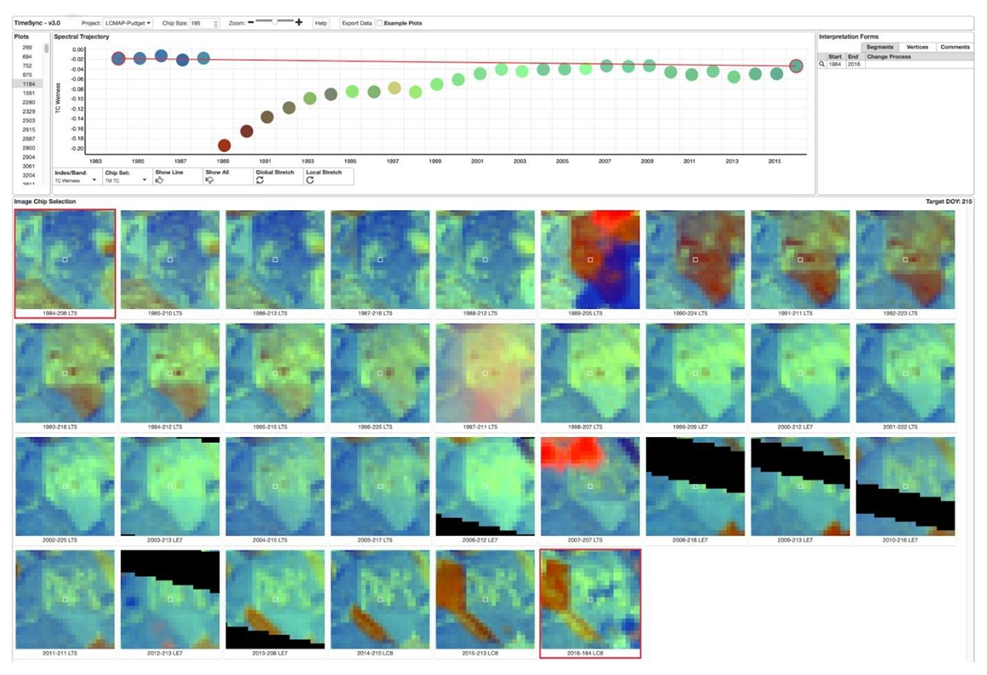

TimeSync#

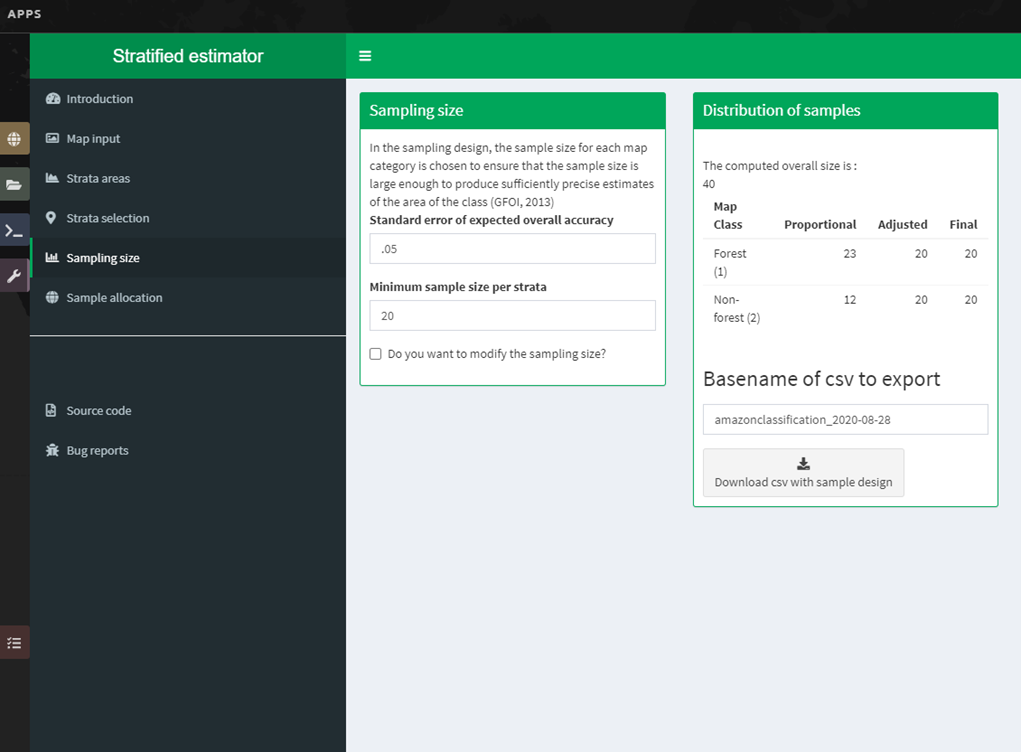

TimeSync was created by Oregon State University, Pacific Northwest Research Station, the Forest Service Department of Agriculture, and the USFS Remote Sensing Applications Center. From the TimeSync User manual for Version 3: